Hello Engineering Leaders and AI Enthusiasts!

This newsletter brings you the latest AI updates in just 4 minutes! Dive in for a quick summary of everything important that happened in AI over the last week.

And a huge shoutout to our amazing readers. We appreciate you😊

In today’s edition:

💰 Cursor’s AI matches GPT at 10x lower cost

⚡ Anthropic surveys 81k people to study AI hopes, fears

🧠 Alibaba’s AI model can now hear, watch, and clone your voice

🖥️ MiniMax unveils self-evolving AI model

💡 Knowledge Nugget: AI Gets Cheaper. Your AI Bill Doesn’t by Kyle Tsai

Let’s go!

Cursor’s AI matches GPT at 10x lower cost

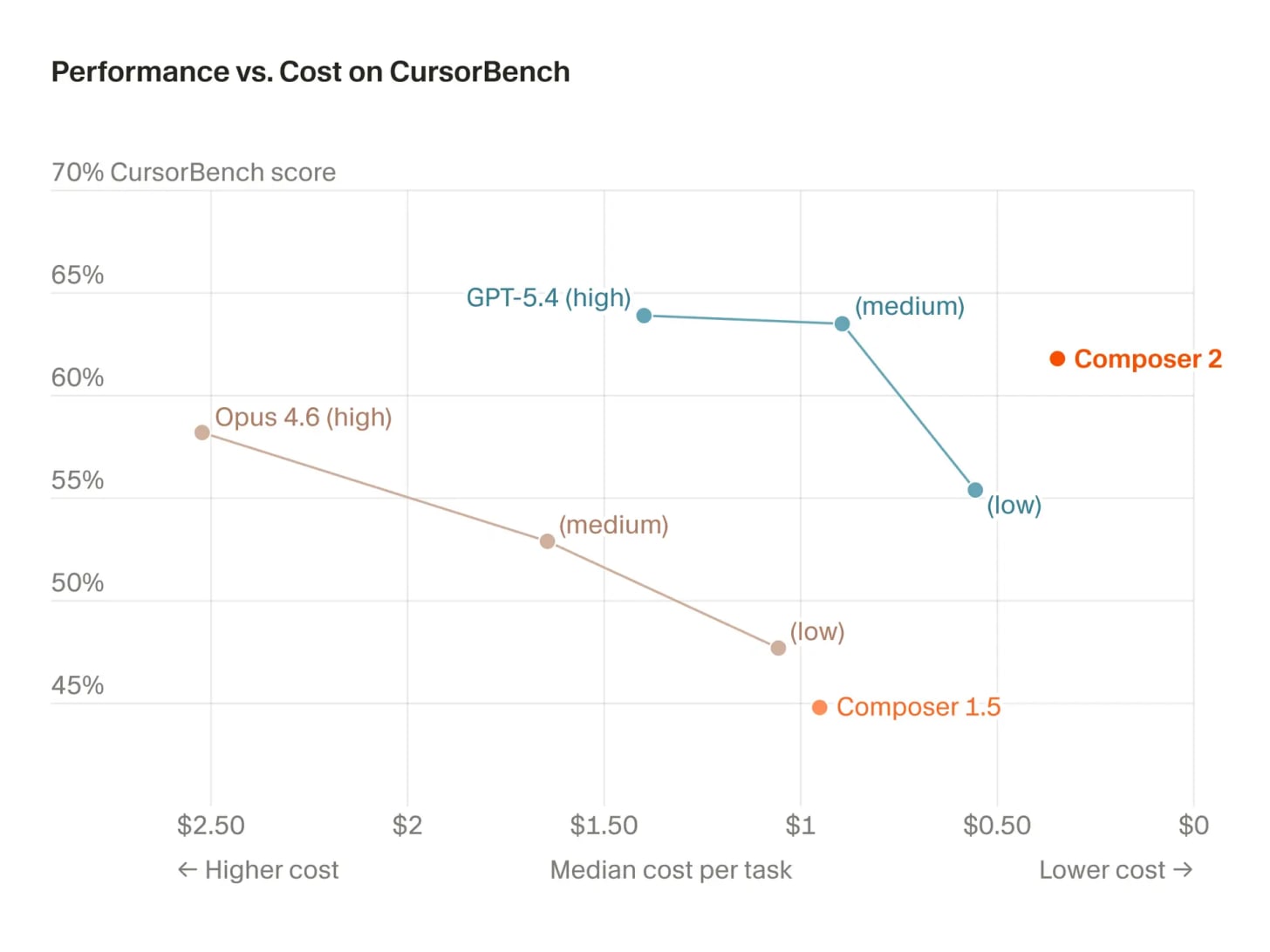

Anysphere has launched Composer 2, its latest in-house coding model powering the Cursor editor, and it’s punching well above its weight. The model outperforms Anthropic’s Opus 4.6 on independent coding benchmarks and comes within striking distance of GPT-5.4, positioning it among the top-tier coding AIs.

Composer 2 runs at roughly 1/10th the price of GPT-5.4 and 1/20th of Opus, while delivering comparable performance. Across three rapid iterations, Cursor’s models have improved significantly, signaling how quickly smaller, focused players are closing the gap with frontier AI labs.

Why does it matter?

Cursor has quickly moved from relying on frontier models to building one that competes at a fraction of the cost. Closing the gap this fast as an application-layer company is notable, and Composer 2’s pricing could force developers to rethink paying premium rates for models like GPT-5.4 or Opus 4.6.

Anthropic surveys 81k people to study AI hopes, fears

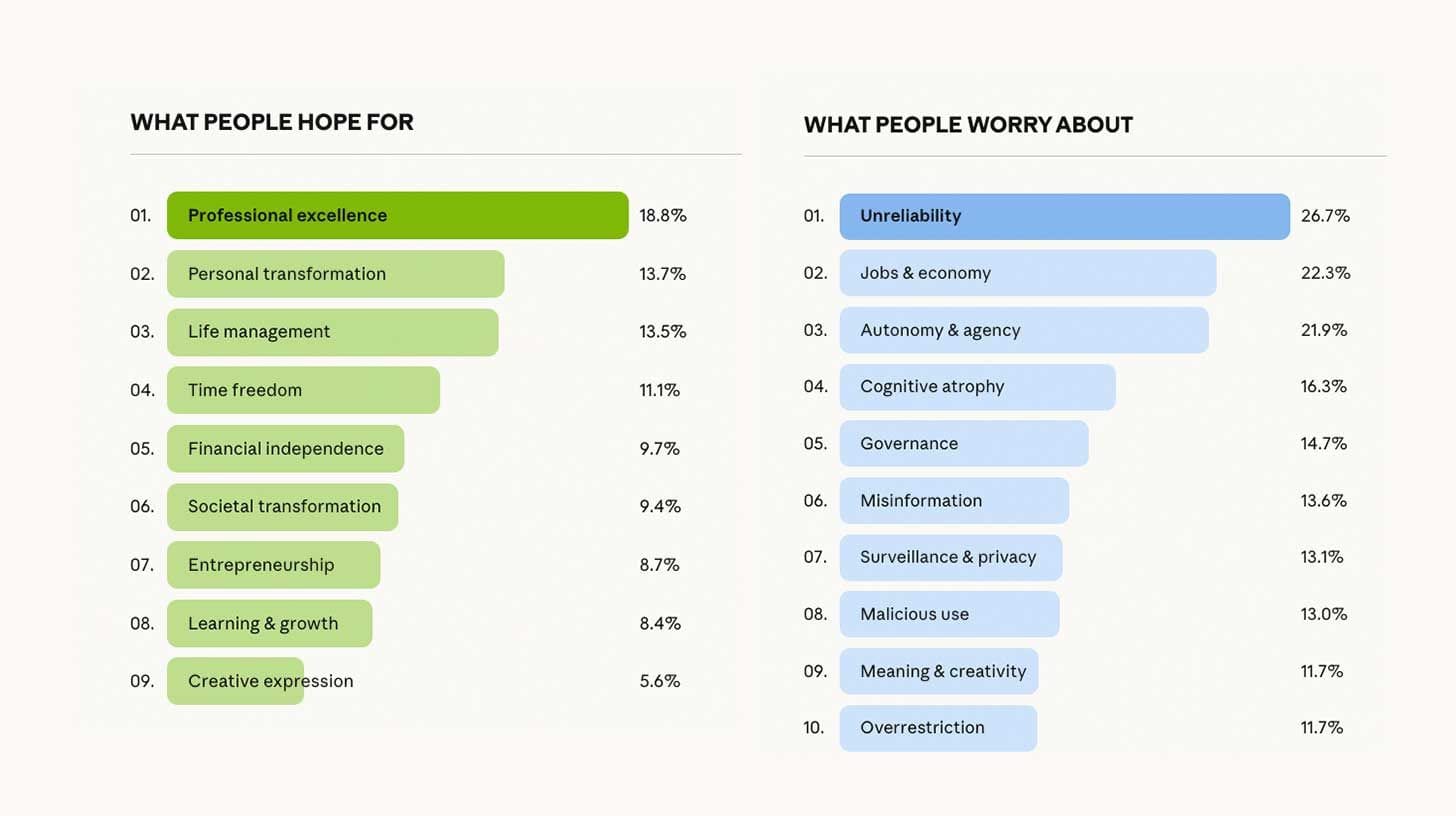

Anthropic has released one of the largest AI sentiment studies to date, using its own model, Claude, to interview over 81,000 users across 159 countries. The conversations were conducted in 70 languages, focusing on how people feel about AI’s future, what excites them and what worries them.

The results show a clear split: most users are optimistic about AI improving productivity, financial freedom, and daily life management. But concerns remain strong, with fear of AI making mistakes topping the list, alongside job loss, over-reliance, and losing control. Sentiment also varies globally, with regions like India showing higher optimism compared to more cautious views in the US and Europe.

Why does it matter?

AI sentiment often appears flat in traditional surveys, but this study captures the depth and complexity behind those views. It also shows how AI can now be used to conduct open-ended, large-scale qualitative research in ways that weren’t possible a year ago.

Alibaba’s AI model can now hear, watch, and clone your voice

Alibaba has launched Qwen3.5-Omni, a fully multimodal AI model that can natively process text, images, audio, and video, all in real time. The model combines strong reasoning with interaction, achieving state-of-the-art results on 215 benchmarks and introducing features like script-level video understanding and human-like voice conversations with interruption support.

The standout feature is “Audio-Visual Vibe Coding.” Users can simply describe what they want through a camera, and the model generates functional outputs like websites or games instantly. It also supports 70+ languages and can autonomously decide when to fetch real-time data or call external tools, pushing it closer to independent AI systems.

Why does it matter?

Multimodal AI has long seemed inevitable, but Qwen shows how fast it’s becoming the default. As models start seeing, hearing, and acting in real time, chat-based interfaces begin to feel limiting, pushing developers toward building full-stack, AI-driven experiences instead.

MiniMax unveils self-evolving AI model

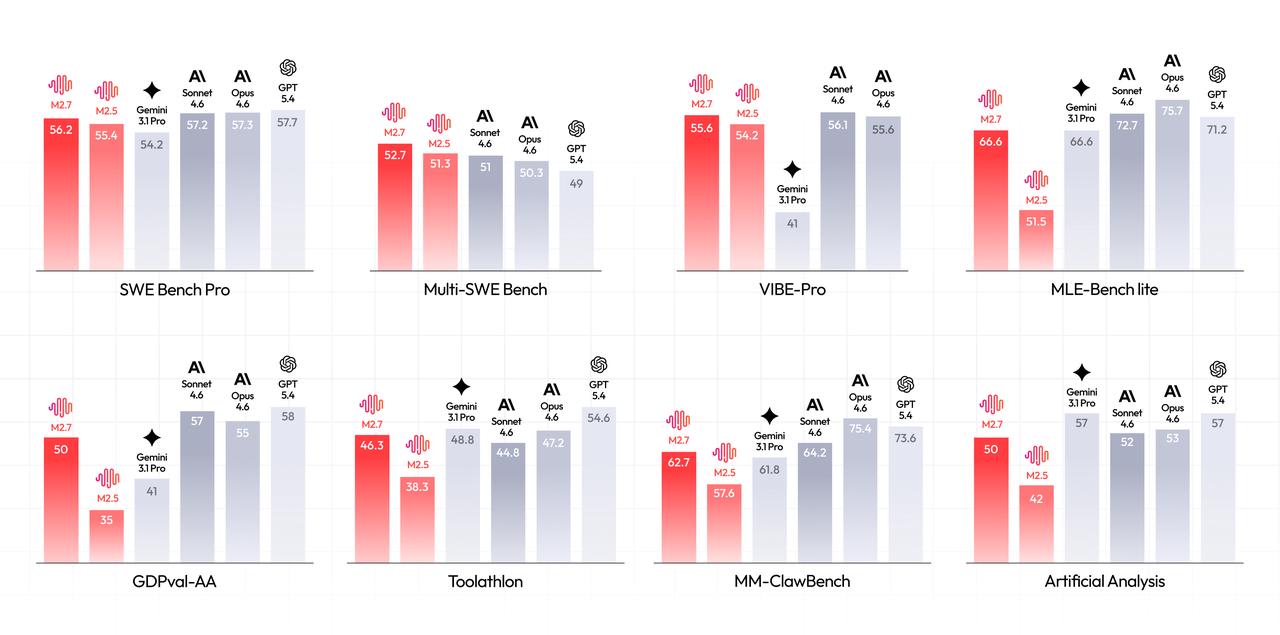

MiniMax has introduced M2.7, a model designed to actively participate in its own training process. Instead of relying solely on human-led updates, the model writes its own improvement routines, analyzes its mistakes, and refines how it learns through repeated feedback loops.

Across 100+ autonomous improvement cycles, M2.7 boosted its accuracy by around 30% and achieved competitive scores on coding benchmarks, putting it close to top-tier models from leading AI labs.

Why does it matter?

Self-evolving AI has been an expected next step, with signals from OpenAI, Anthropic, and others, but MiniMax is among the first to explicitly demonstrate it in practice. Models that can improve themselves may soon become the default, but for now, this is still an early glimpse of that shift.

Knowledge Nugget: AI Gets Cheaper. Your AI Bill Doesn’t

In this article, author Kyle Tsai highlights a growing paradox in AI economics: while tokens are getting cheaper, total AI spend is rising. As models evolve, they reason through problems using multi-step processes like sub-agents, iterations, and self-critique. A single prompt can now trigger multiple layers of computation, dramatically increasing token usage behind the scenes. In some enterprise workflows, this can lead to 500× to 10,000× higher token consumption compared to direct responses.

This shift introduces what the author calls the “thinking tax,” a structural cost of reasoning-heavy AI. Unlike traditional workloads, these costs are non-deterministic: the same task can vary wildly in compute usage depending on how the model processes it internally. Combined with multi-agent orchestration and dynamic pricing tiers, this makes AI spend difficult to predict, model, or control before execution.
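To make the paradox concrete, here is a rough back-of-the-envelope sketch in Python. The prices and token counts are hypothetical, picked only for illustration and not taken from the article; the one figure borrowed from it is the 500× multiplier, the low end of the range quoted above.

```python
def request_cost(tokens: int, price_per_million: float) -> float:
    """Dollar cost of one request at a given price per million tokens."""
    return tokens / 1_000_000 * price_per_million


# Hypothetical numbers, for illustration only.
OLD_PRICE = 10.0        # dollars per million tokens, before the price drop
NEW_PRICE = 1.0         # dollars per million tokens, 10x cheaper
DIRECT_TOKENS = 1_000   # tokens used by a direct, single-pass answer
MULTIPLIER = 500        # low end of the 500x to 10,000x range cited above

# Same task: answered directly at the old price vs. with a
# reasoning-heavy workflow at the new, cheaper price.
direct_cost = request_cost(DIRECT_TOKENS, OLD_PRICE)
agentic_cost = request_cost(DIRECT_TOKENS * MULTIPLIER, NEW_PRICE)
cost_ratio = agentic_cost / direct_cost

print(f"Direct answer, old price:   ${direct_cost:.2f}")
print(f"Agentic answer, new price:  ${agentic_cost:.2f}")
print(f"Cost per request grew about {cost_ratio:.0f}x")
```

Under these assumed numbers, a 10× drop in token price is swamped by a 500× rise in token usage, so the cost of a single request still grows by roughly 50×. Real workloads will differ, but the shape of the math is the same: spend is price multiplied by volume, and volume is the part that is growing.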

Why does it matter?

Lower AI costs are driving higher usage, not lower spend. Costs now depend on how much the model “thinks,” making them inherently unpredictable. The real challenge shifts to forecasting and controlling AI spend before execution, not after.

What Else Is Happening❗

⚡ Google Research introduced TurboQuant, an algorithm that compresses AI memory 6× and boosts speed up to 8× on H100 chips with near-zero accuracy loss.

🧩 ARC Prize Foundation released ARC-AGI-3, a new reasoning benchmark where humans solve tasks easily but top AI models score under 1%, exposing major gaps in general intelligence.

⚖️ A Stanford study finds major AI chatbots often side with users in conflicts even when they’re wrong, reinforcing self-righteous behavior and reducing willingness to reconsider.

🖥️ Anthropic previewed a feature giving Claude control of your desktop, letting it click, type, and complete tasks across apps remotely via mobile-triggered commands.

🎨 Luma AI launched Uni-1, an image model that processes text and visuals in a unified pipeline, delivering strong creative control and competitive pricing.

🖼️ Microsoft released MAI-Image-2, a text-to-image model ranking No. 5 on the Arena leaderboard with major gains in photorealism and text rendering.

🏭 Mistral launched Forge, a platform letting enterprises build custom AI models on proprietary data using full training pipelines without sharing data externally.

💻 Manus launched My Computer, a desktop app that brings its AI agent locally to manage files, run terminal commands, and automate tasks directly on users’ machines.

🗺️ Google upgraded Maps with Gemini, adding Ask Maps for conversational trip planning and Immersive Navigation with 3D route visualization and real-time guidance.

New to the newsletter?

The AI Edge keeps engineering leaders & AI enthusiasts like you on the cutting edge of AI. From machine learning to ChatGPT to generative AI and large language models, we break down the latest AI developments and how you can apply them in your work.

Thanks for reading, and see you next week! 😊
