Hello Engineering Leaders and AI Enthusiasts!

This newsletter brings you the latest AI updates in just 4 minutes! Dive in for a quick summary of everything important that happened in AI over the last week.

And a huge shoutout to our amazing readers. We appreciate you 😊

In today’s edition:

🤖 Z AI’s GLM-5.1 model beats GPT in coding

🌐 Google launches Gemma 4 open models

⚡ Microsoft drops faster, cheaper AI models

📊️ Stanford’s report finds AI hits 53% adoption, trust at 31%

💡 Knowledge Nugget: AI Infrastructure Roadmap: Five frontiers for 2026 by Janelle Teng Wade

Let’s go!

Z AI’s GLM-5.1 model beats GPT in coding

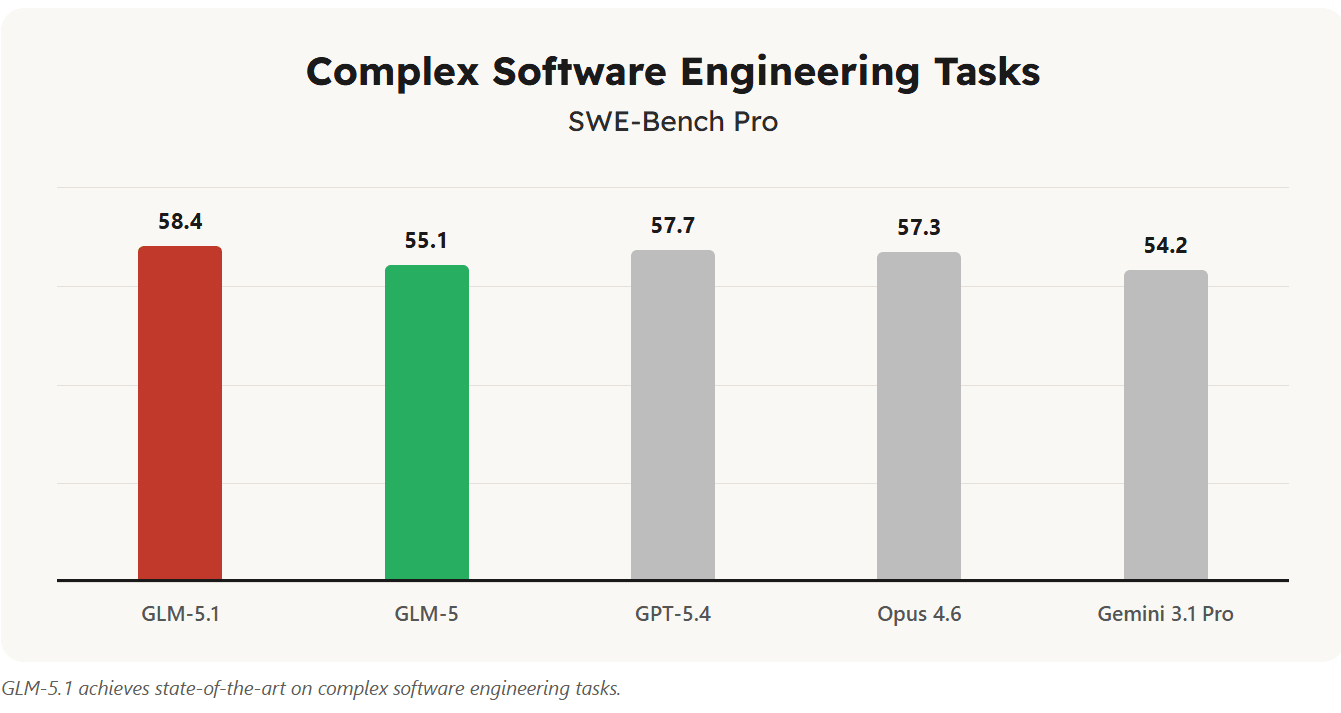

Z AI has released GLM-5.1, a new open-source coding model that competes directly with top-tier systems and even outperforms them on key benchmarks. The model scored higher than GPT-5.4 and Opus 4.6 on SWE-Bench Pro, a rare case of an open model leading that leaderboard.

Beyond benchmarks, GLM-5.1 is built for long-running, autonomous tasks. In testing, it completed an 8-hour coding session that built a full Linux desktop experience as a web app, handling everything from file systems to terminal interactions without human input.

Why does it matter?

Chinese labs continue to push forward, and GLM-5.1 combines strong coding performance with long-horizon task capabilities. Instead of just answering short prompts, models are now working through complex tasks for hours without breaking down, something that wasn’t possible before. And with an open model now outperforming GPT-5.4 on benchmarks, the gap between open-source and top closed models is shrinking fast.

Google launches Gemma 4 open models

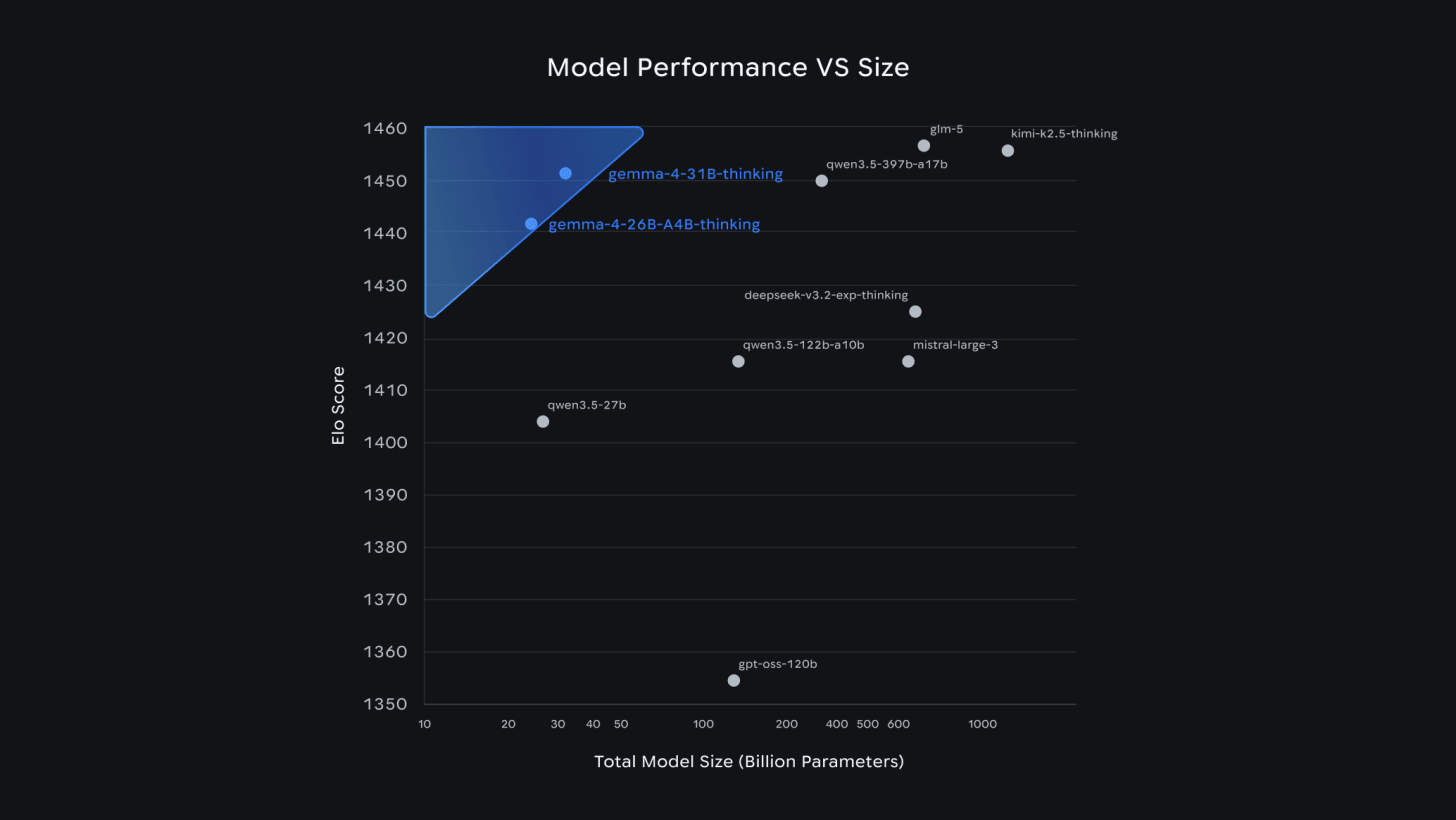

Google DeepMind has released Gemma 4, a new family of open AI models designed to run across everything from smartphones to full-scale systems. The models support coding, vision, and multi-step agent workflows, with smaller variants even capable of running offline with voice support.

Performance-wise, the larger Gemma 4 models are competitive with leading open models despite their smaller size. But the bigger shift is licensing: Google has moved to Apache 2.0, allowing developers to freely modify, deploy, and commercialize the models with minimal restrictions.

Why does it matter?

Open models have largely been led by Chinese labs, but Gemma 4 signals a stronger push from the U.S. side to compete on openness. Google moving to a fully permissive license stands in contrast to others tightening control, suggesting the next phase of competition may be shaped as much by licensing as by model performance.

Microsoft drops faster, cheaper AI models

Microsoft has introduced three new MAI models spanning speech recognition, voice generation, and image generation, aiming to compete on speed, quality, and price. The lineup includes Transcribe-1 for speech-to-text, Voice-1 for realistic voice generation, and Image-2 for high-quality visuals, all available through Microsoft Foundry.

The models are designed for real-world performance. Transcribe-1 delivers faster, more accurate transcription across multiple languages, Voice-1 can generate expressive speech and clone voices from short samples, and Image-2 produces visuals up to 2× faster. The broader push is clear: enterprise-ready AI models that pair strong performance with aggressive pricing.

Why does it matter?

When every modality becomes cheap and fast at the same time, multimodal AI stops being a premium capability and becomes the baseline. That shift puts pressure on competitors and pushes developers to optimize less for raw capability and more for cost, scale, and integration.

Stanford’s report finds AI hits 53% adoption, trust at 31%

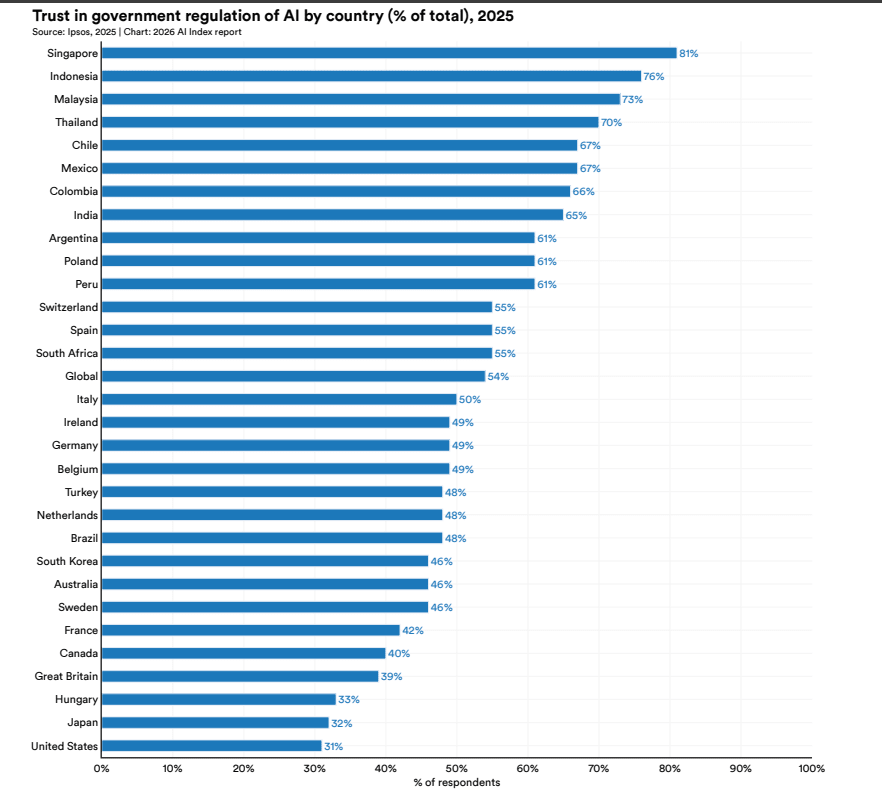

Stanford’s AI Index Report 2026 shows that AI has now reached over half the global population, faster than either the PC or the internet did. But while usage is surging, sentiment is moving in the opposite direction.

The gap is widening: nearly 75% of AI experts are optimistic about AI’s impact on jobs, but only 23% of the public agrees. Entry-level developer jobs (ages 22–25) have already dropped ~20% since 2024, even as demand for senior engineers grows. Meanwhile, the U.S. leads in building AI but ranks just 24th in adoption (28.3%), with regions like Southeast Asia pulling ahead.

Why does it matter?

This is just a snapshot from a much larger report, but the expert–public divide stands out. AI insiders see productivity gains and long-term upside, while public sentiment is moving the other way, with trust falling and job fears rising. That disconnect, combined with only 31% of people trusting institutions to manage AI, looks like the kind of gap that doesn’t stay theoretical for long.

Enjoying the latest AI updates?

Refer your pals to subscribe to our newsletter and get exclusive access to 400+ game-changing AI tools.

When you use the referral link above or the “Share” button on any post, you’ll get the credit for any new subscribers. All you need to do is send the link via text or email or share it on social media with friends.

Knowledge Nugget: AI Infrastructure Roadmap: Five frontiers for 2026

In this article, Janelle Teng Wade outlines how AI infrastructure is entering a new phase. The first wave focused on building powerful models: more parameters, more data, and better benchmarks. Now the focus is shifting toward making AI systems work in real-world environments. As enterprises move from experiments to production, infrastructure must evolve to support memory, context awareness, continuous learning, and efficient deployment at scale.

The article identifies five key frontiers driving this shift:

- systems that “harness” models with better memory and observability,
- continual learning that allows models to improve after deployment,
- reinforcement learning platforms for real-world decision-making,
- inference optimization as usage scales, and
- world models that simulate physical environments.

Together, these address the gap between impressive demos and reliable, production-ready AI systems.

Why does it matter?

Model performance alone is no longer enough to deliver consistent outcomes in production. As with early cloud computing, the real value will come from the infrastructure that makes AI reliable, adaptive, and scalable. The companies that solve this layer will define how AI actually gets deployed across industries.

What Else Is Happening❗

🏪 Andon Labs deployed Luna, an AI agent running a real retail shop with a $100K budget, handling hiring, operations, and decision-making as a fully autonomous “AI employer.”

❤️ Researchers at the University of Oxford developed an AI that detects hidden heart risk from CT scans, predicting heart failure up to 5 years in advance with 86% accuracy.

💰 Perplexity added Plaid integration to its Computer agent, turning it into a personal finance hub that connects accounts and auto-builds budgets, trackers, and financial plans.

🎭 HeyGen launched Avatar V, an ultra-realistic AI avatar model that captures identity from a 15-second video and prevents visual drift over time.

🧠 Meta’s Superintelligence Labs debuted Muse Spark, a multimodal reasoning model competitive with frontier systems, marking the first major release from Alexandr Wang’s division.

🛡️ Anthropic launched Project Glasswing, a cybersecurity coalition powered by its unreleased Mythos model, which uncovered thousands of critical vulnerabilities across major systems.

📜 OpenAI published a policy blueprint for a superintelligent future, proposing ideas like AI profit taxes, a public wealth fund, and a potential shift to a 4-day workweek.

🎬 Netflix released VOID, an open-source video tool that removes objects while realistically adjusting scene physics, outperforming traditional inpainting methods.

🧑‍🏭 OpenAI’s “Project Stagecraft” is reportedly paying freelancers $50/hr to create job-specific training data, mapping real-world workflows to improve AI capabilities.

New to the newsletter?

The AI Edge keeps engineering leaders & AI enthusiasts like you on the cutting edge of AI. From machine learning to ChatGPT to generative AI and large language models, we break down the latest AI developments and how you can apply them in your work.

Thanks for reading, and see you next week! 😊
