Hello Engineering Leaders and AI Enthusiasts!

This newsletter brings you the latest AI updates in just 4 minutes! Dive in for a quick summary of everything important that happened in AI over the last week.

And a huge shoutout to our amazing readers. We appreciate you 😊

In today’s edition:

💸 Ernie 5.1 challenges top AI at 94% lower pre-training cost

🎙️ OpenAI advances voice AI with new API models

⚡ Thinking Machines Lab unveils real-time AI interaction models

📈 Anthropic beats OpenAI on business adoption

💡 Knowledge Nugget: The AI Productivity Scorecard Is Broken by Ravinder

Let’s go!

Ernie 5.1 challenges top AI at 94% lower pre-training cost

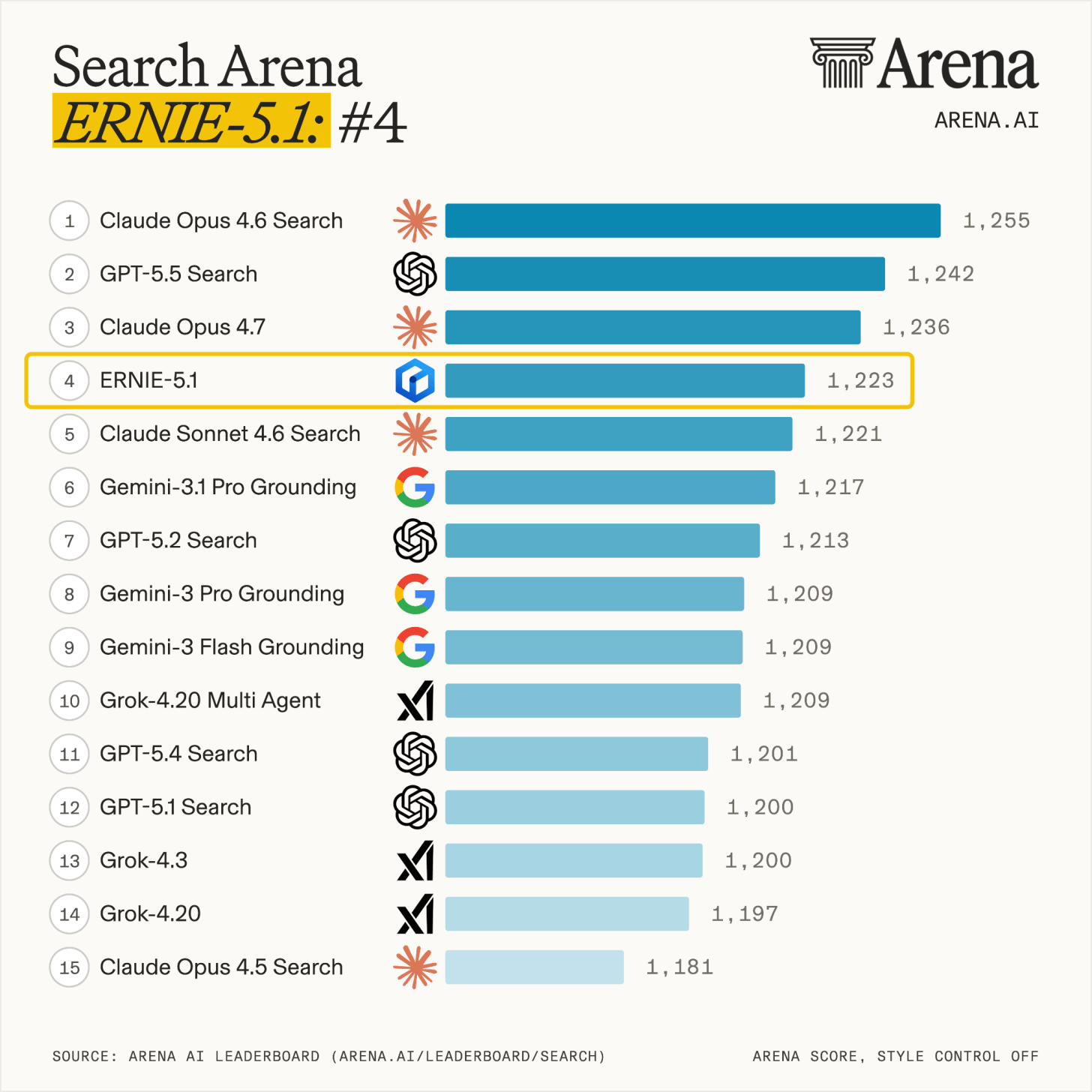

Baidu released Ernie 5.1, a leaner version of its Ernie 5.0 model that uses roughly one-third of the parameters and significantly less compute while still competing with top frontier systems. Baidu says the model ranks fourth globally on the Arena Search Leaderboard and outperforms DeepSeek-V4-Pro on several autonomous AI agent benchmarks.

The company credits the gains to a new “Once-For-All” training framework that trains multiple model sizes in a single run, reducing pre-training costs to just 6% of those for comparable models. Baidu also rebuilt its reinforcement learning infrastructure to scale different training components independently, helping improve efficiency and reduce model drift during training.

Why does it matter?

Baidu is proving that frontier-level AI performance no longer requires frontier-level compute spending. If these efficiency claims hold up, labs chasing ever-larger models may start looking like companies building bigger data centers instead of better AI systems.

OpenAI advances voice AI with new API models

OpenAI launched GPT-Realtime-2, GPT-Realtime-Translate, and GPT-Realtime-Whisper, a new suite of API voice models designed for live AI conversations and speech-based agents. The upgrades bring faster reasoning, streaming responses, multi-tool usage, and more natural voice interactions to real-time AI systems.

The flagship Realtime-2 model can “talk while thinking,” use multiple tools simultaneously, and respond with improved tone and realism. OpenAI also introduced live translation across 70+ languages and a streaming transcription model, giving developers a full stack for building AI-powered voice experiences. Companies like Zillow and Priceline are already using the technology for customer-facing AI agents.

Why does it matter?

AI voice is moving past rigid turn-based interactions. OpenAI’s latest model points to a future where AI can reason, use tools, and complete tasks through seamless conversation. While the industry focuses on text agents, the next wave of AI will be spoken to, not typed at.

Thinking Machines Lab unveils real-time AI interaction models

Thinking Machines Lab introduced a research preview of “interaction models,” a new type of AI system designed to collaborate with users live across voice, video, and text. Instead of waiting for prompts and replies like traditional chatbots, the model operates in a continuous streaming loop, processing conversations and visual inputs in near real time.

The system can handle interruptions, react to visual changes, translate live speech, and even continue talking while a secondary background model performs slower reasoning, searches, or tool-based tasks. CEO Mira Murati said the company’s focus is not just smarter AI, but building systems that work more naturally alongside humans.

Why does it matter?

Thinking Machines Lab has kept a low profile since launch, but interaction models give it something most labs don’t have: a design philosophy built around how humans actually collaborate, not around how long a model can operate without a human. The real test now is whether that’s a lasting edge or just a gap the bigger players close in their next release.

Anthropic beats OpenAI on business adoption

Ramp’s latest AI Index shows Anthropic surpassing OpenAI in paid business adoption for the first time. Anthropic climbed to 34.4% adoption across tracked U.S. businesses, while OpenAI dropped to 32.3%, even as overall enterprise AI usage continued rising.

Ramp says Claude Code helped fuel much of the momentum, with Anthropic expanding beyond engineering teams into finance, legal, and research workflows. The report is based on AI-related spending across 50K+ businesses, making it one of the clearest signals yet of where enterprises are putting money behind AI adoption.

Why does it matter?

OpenAI is far from losing ground. Ramp’s data likely doesn’t reflect many major enterprise deals, while ChatGPT continues to hold a strong lead on the consumer side. The bigger picture lies in the long-term trajectory, likely the same signal behind Fidji Simo’s enterprise-first shift at OpenAI, now showing up through launches like Codex and other enterprise-focused moves.

Knowledge Nugget: The AI Productivity Scorecard Is Broken

In this sharp piece, Ravinder argues that most companies are using the wrong scorecards to measure AI productivity. Metrics like PR volume, lines of code, Copilot adoption, or “time saved” only capture output velocity, not whether engineering quality actually improved.

The bigger shift is that AI changed the developer’s job itself. Engineers are spending less time writing code and more time validating AI-generated output, reviewing subtle mistakes, and correcting polished-looking but flawed logic. Traditional productivity metrics were never designed for that workflow.

The author suggests tracking newer signals instead:

- How much AI-generated code ships without major edits
- Whether AI-created PRs increase review overhead
- If AI introduces different classes of bugs
- Whether AI-generated tests are just validating AI-generated assumptions
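
For teams that want to try the first two signals, here is a minimal Python sketch. It is not from Ravinder's piece: the `PullRequest` fields, the 20% "major edit" threshold, and the per-line review-time ratio are all illustrative assumptions, and how a PR gets labeled as AI-generated is left to your own tooling.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class PullRequest:
    # All fields are hypothetical; map them to whatever your tooling records.
    ai_generated: bool             # e.g. inferred from a commit trailer or label
    lines_added: int               # size of the first submitted revision
    lines_changed_in_review: int   # lines edited between first revision and merge
    review_minutes: float          # reviewer time spent on the PR


def shipped_without_major_edits(prs, threshold=0.2):
    """Share of AI-generated PRs whose review edits stayed under `threshold`
    of their original size. The threshold is an arbitrary assumption."""
    ai = [p for p in prs if p.ai_generated and p.lines_added > 0]
    if not ai:
        return None
    ok = sum(1 for p in ai if p.lines_changed_in_review / p.lines_added < threshold)
    return ok / len(ai)


def review_overhead_ratio(prs):
    """Median review minutes per added line for AI PRs, divided by the same
    figure for human PRs. A value above 1 suggests AI PRs cost more to review."""
    def per_line(group):
        vals = [p.review_minutes / p.lines_added for p in group if p.lines_added > 0]
        return median(vals) if vals else None

    ai = per_line([p for p in prs if p.ai_generated])
    human = per_line([p for p in prs if not p.ai_generated])
    if ai is None or human is None or human == 0:
        return None
    return ai / human


# Made-up sample data, for illustration only.
prs = [
    PullRequest(True, 100, 10, 30.0),
    PullRequest(True, 200, 80, 80.0),
    PullRequest(False, 100, 5, 20.0),
    PullRequest(False, 50, 5, 10.0),
]

print(shipped_without_major_edits(prs))
print(review_overhead_ratio(prs))
```

The other two signals (new bug classes, and tests that only confirm AI-generated assumptions) need incident tagging and human judgment, so they are harder to reduce to a single ratio.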

Why does it matter?

Right now, many teams are mistaking acceleration for productivity. Shipping more code faster means very little if review burden, technical debt, and subtle defects rise underneath. AI is making engineering output easier to generate, but harder to trust, and most dashboards aren’t measuring that gap.

What Else Is Happening❗

🛒 Amazon merged shopping chatbot Rufus into Alexa for Shopping, creating an AI assistant that remembers purchases, compares products, tracks prices, and can auto-buy across stores.

➗ Google DeepMind unveiled an AI co-mathematician based on Gemini 3.1, using agent teams and parallel reasoning workflows to tackle unsolved research-level math problems.

🏃 Google opened its Gemini-powered AI health coach to the public, pairing it with a new screenless Fitbit tracker and a unified Google Health data hub.

📱 OpenAI is reportedly fast-tracking its first AI phone for 2027 production, featuring advanced visual sensing hardware and dual AI processors for on-device agents.

🏦 Anthropic launched 10 AI agents for finance and insurance, designed to handle tasks like KYC screening, pitchbook creation, valuations, and earnings analysis.

🏠 California startup Span partnered with Nvidia to install wall-mounted mini AI data centers in homes and small businesses, using unused local grid capacity for distributed compute.

🏥 A Harvard study found OpenAI’s o1-preview diagnosed real ER cases more accurately than physicians, correctly identifying conditions from raw health-record text alone.

New to the newsletter?

The AI Edge keeps engineering leaders & AI enthusiasts like you on the cutting edge of AI. From machine learning to ChatGPT to generative AI and large language models, we break down the latest AI developments and how you can apply them in your work.

Thanks for reading, and see you next week! 😊
