Hello Engineering Leaders and AI Enthusiasts!

This newsletter brings you the latest AI updates in just 4 minutes! Dive in for a quick summary of everything important that happened in AI over the last week.

And a huge shoutout to our amazing readers. We appreciate you 😊

In today’s edition:

💰 Google’s Nano Banana 2 tops image generation charts

⚡ Google’s Gemini 3.1 Flash-Lite boosts speed and cuts cost

🧠 OpenAI’s GPT-5.4 model beats humans on tasks

🖥️ Alibaba’s tiny AI beats models 13× bigger

💡 Knowledge Nugget: AI is a Mood, Not a Method by Rob Mealey

Let’s go!

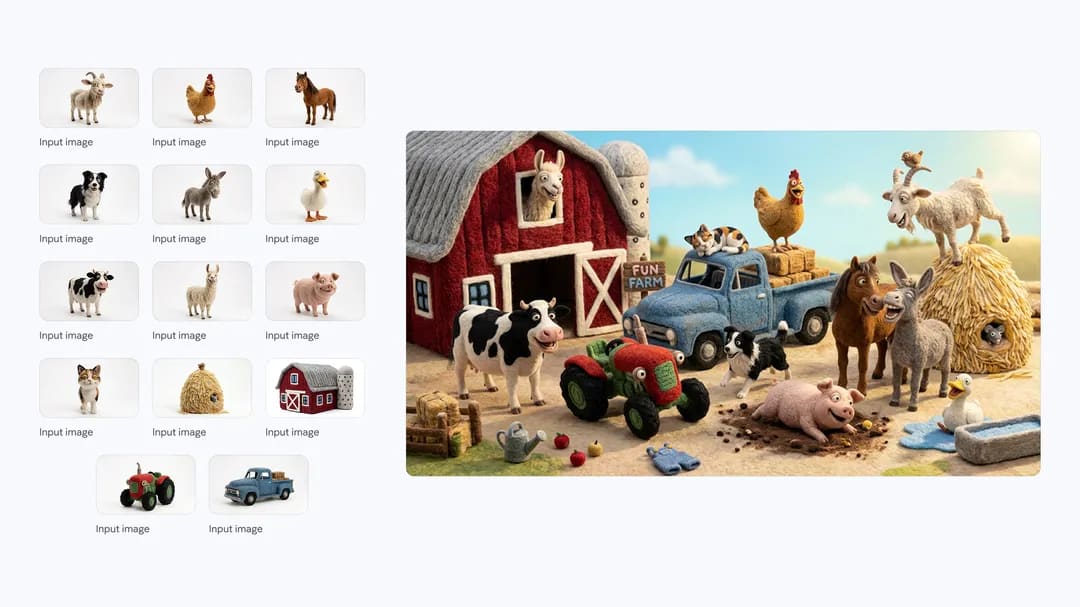

Google’s Nano Banana 2 tops image generation charts

Google has released Nano Banana 2, an upgraded version of its viral image generation model, and it’s already topping the charts. The model ranked #1 for text-to-image generation on Artificial Analysis and LM Arena, beating both Nano Banana Pro and OpenAI’s GPT Image 1.5. It also landed at #3 for image editing tasks, showing strong performance beyond just generating images.

The upgrade focuses on quality, speed, and cost. Nano Banana 2 can generate 4K resolution images across different aspect ratios while maintaining consistent scenes with up to five characters and fourteen objects. It also runs at Gemini Flash-level speeds and costs roughly $0.07 per image, about half the price of competing models. Google has already made it the default image generator across the Gemini ecosystem, while the Pro version remains available for paid users.

Why does it matter?

Nano Banana models have been pushing the frontier of image generation since their debut, and this latest release delivers top-tier quality at the speed and cost of a flash model. With Nano Banana 2, the long-standing tradeoff between image quality and affordability may finally be disappearing.

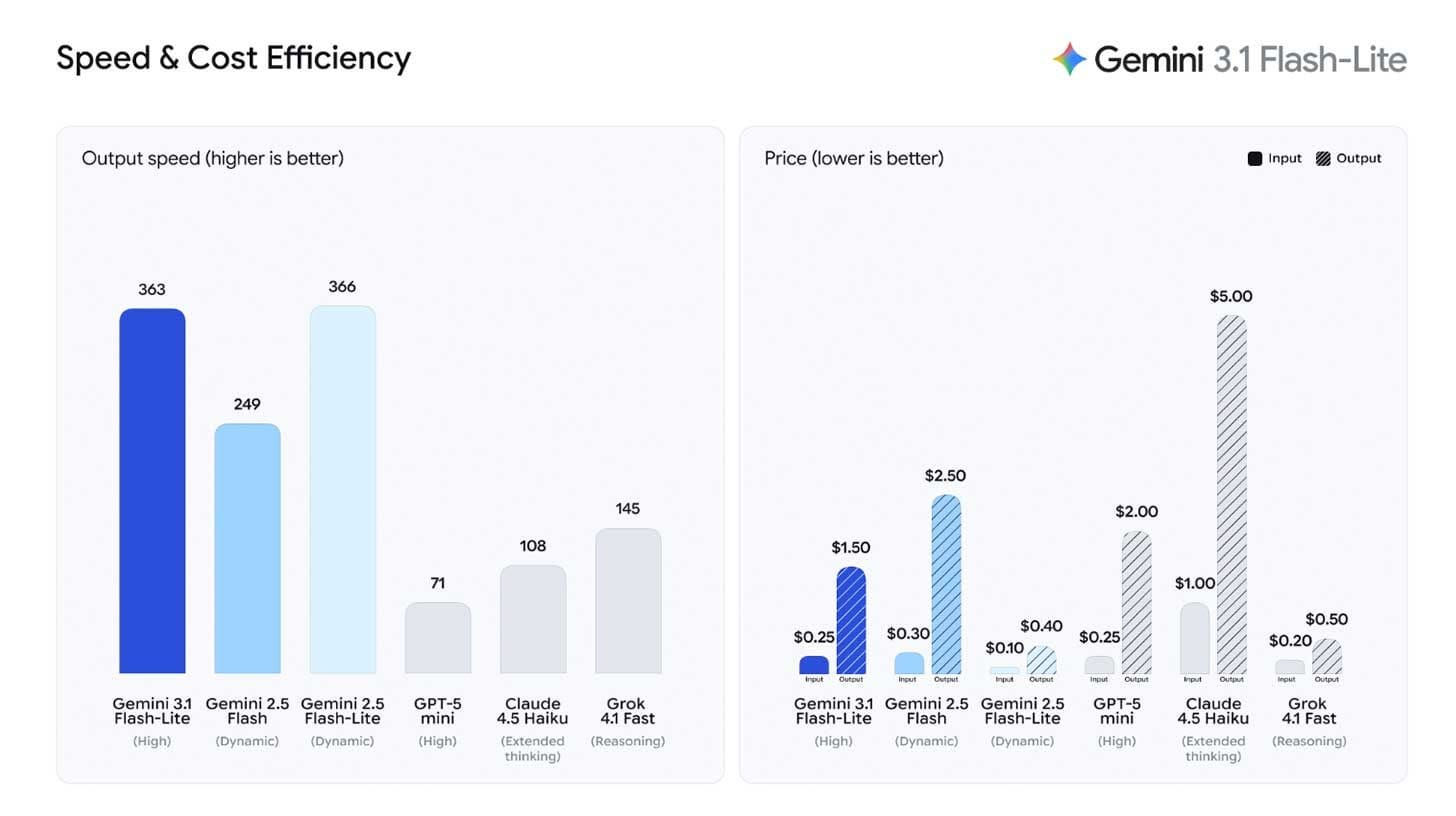

Google’s Gemini 3.1 Flash-Lite boosts speed and cuts cost

Google has introduced Gemini 3.1 Flash-Lite, the fastest and most affordable model in its Gemini 3 lineup. Designed for high-volume AI workloads, Flash-Lite delivers near-instant responses while serving as a lower-cost option for tasks that don’t require a flagship model like Gemini 3.1 Pro.

Despite its lightweight positioning, the model brings notable improvements. Flash-Lite posted a 12-point jump on the Artificial Analysis Intelligence Index over its predecessor and even outperformed some larger previous-generation Gemini models on reasoning tasks. It is also priced aggressively, at roughly one-quarter the cost of Anthropic’s Haiku and one-eighth the cost of Gemini 3.1 Pro.

Why does it matter?

The AI race is shifting toward cheap, fast models at scale, and Flash-Lite shows Google can push prices down without losing much capability. But while the Gemini 3 series continues to impress on benchmarks, its real-world traction still trails the visibility of OpenAI and Anthropic in 2026.

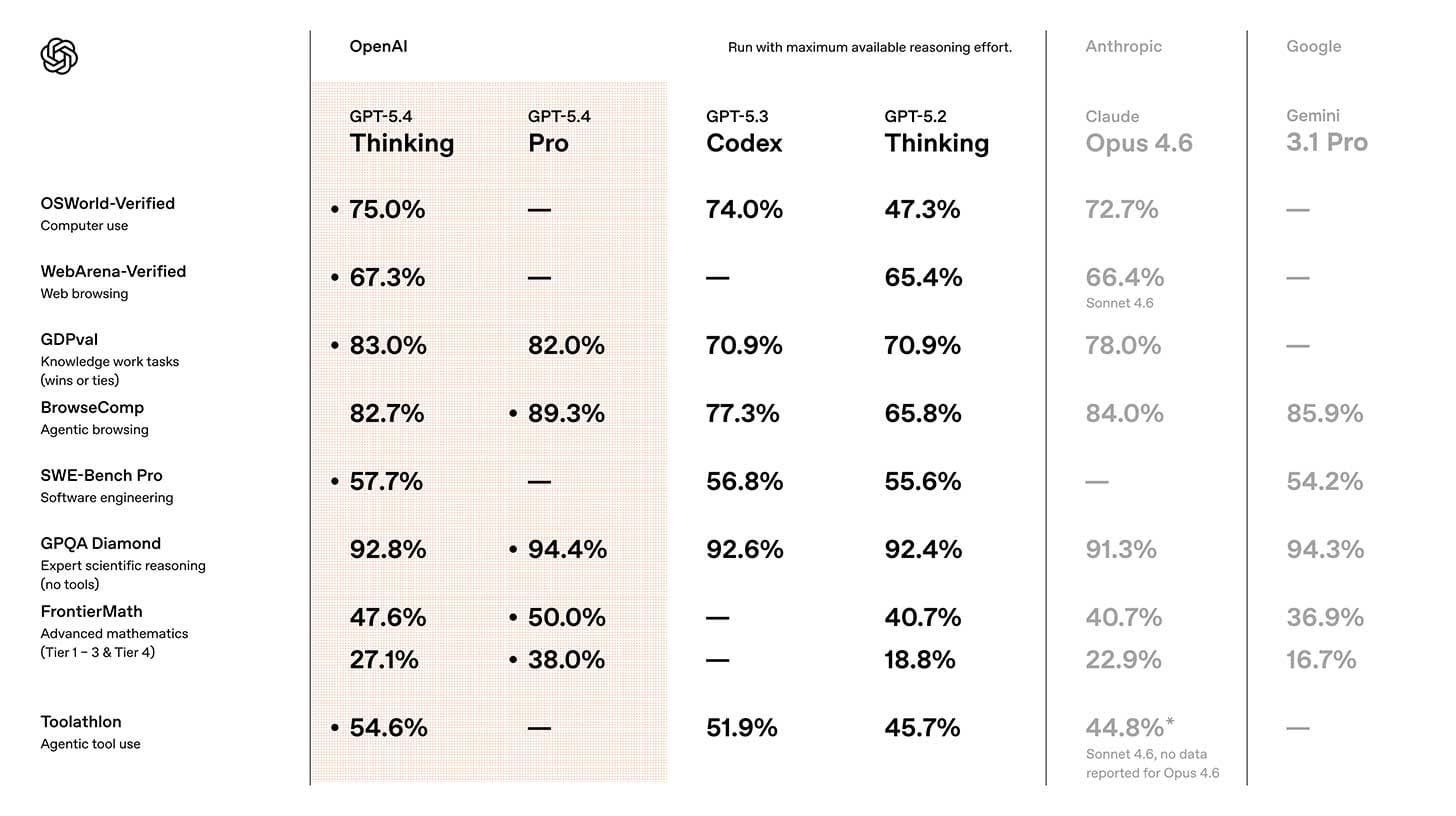

OpenAI’s GPT-5.4 model beats humans on tasks

OpenAI has introduced GPT-5.4, its latest flagship AI model with major upgrades in reasoning, coding, and real-world task execution. The model launched just days after GPT-5.3 Instant became the default chat model and is now available as GPT-5.4 Thinking for Plus, Team, and Pro users.

The model shows strong gains on real-world benchmarks. GPT-5.4 scored 75% on OSWorld-V, a test that measures how well AI navigates desktop environments, beating the human baseline of 72.4%. It also supports up to 1 million tokens of context and a new high-effort reasoning mode, allowing agents to plan and execute longer tasks. On the GDPval benchmark covering 44 knowledge jobs, the model matched or outperformed professionals 83% of the time, up from 71% in the previous version.

Why does it matter?

After a week of mixed sentiment, GPT-5.4 gives OpenAI a much-needed win, with stronger reasoning and desktop task performance pointing toward more capable AI agents. The message from OpenAI researcher Noam Brown is clear: “We see no wall.”

Alibaba’s tiny AI beats models 13× bigger

Alibaba has released Qwen3.5 Small, a new family of open-source AI models designed to run locally on laptops and even smartphones. The lineup includes four models ranging from 0.8B parameters for mobile devices to 9B for laptops, all available for commercial use under an open-source license.

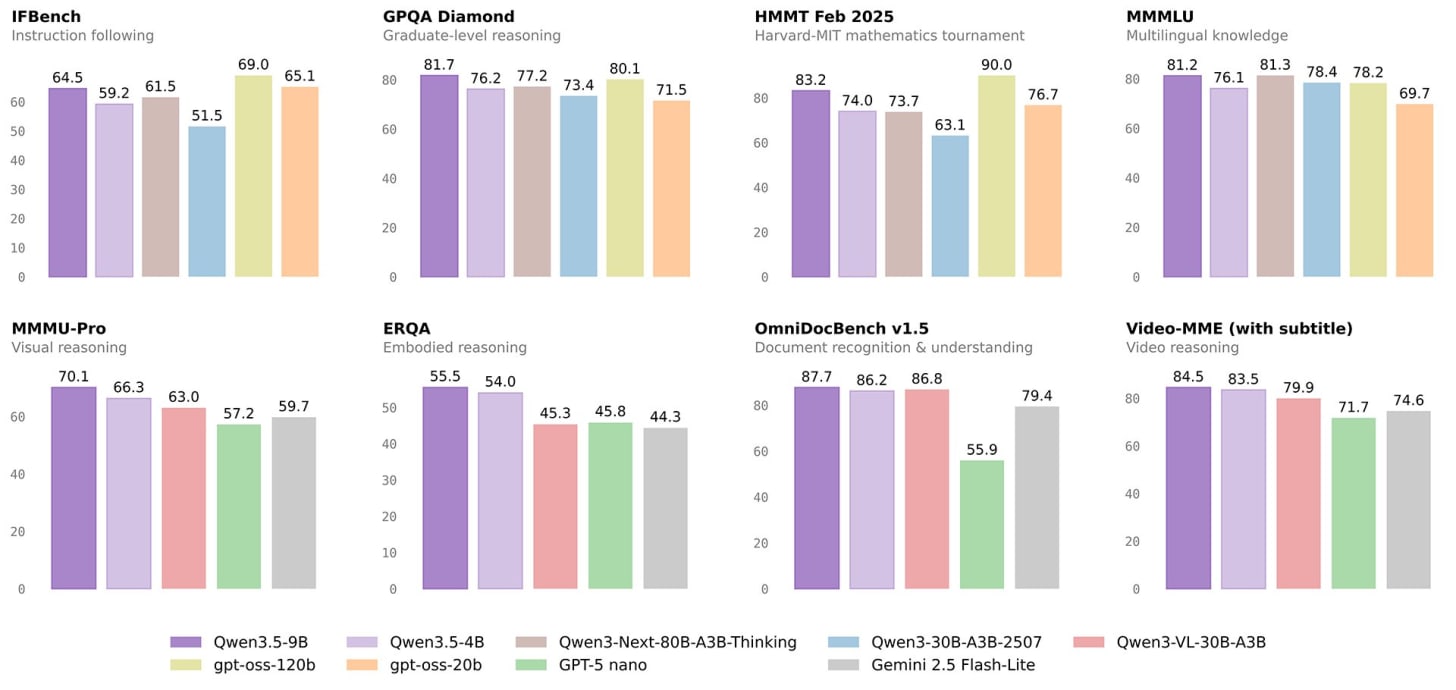

Despite their small size, the models deliver surprising performance. The 9B version outscored OpenAI’s GPT-OSS-120B, a model 13× larger, on graduate-level reasoning and multilingual knowledge benchmarks. The models also support text, images, and video natively, with the 4B version matching visual benchmark scores that previously required models 20× bigger.

Why does it matter?

Frontier models may dominate benchmarks, but small models power the AI people actually use: inside apps, on laptops, and on mobile devices, without cloud costs. With this release, Alibaba just pushed that practical layer of AI forward.

Knowledge Nugget: AI is a Mood, Not a Method

In this article, author Rob Mealey argues that “AI” is often treated less like a technical method and more like a cultural label. Historically, whenever a new technology appears to mimic human intelligence, it gets called AI. But once the technology becomes reliable and widely adopted, the label quietly disappears. Search algorithms, recommendation systems, and speech recognition were all once considered AI breakthroughs. Today, they’re simply treated as standard software infrastructure.

This cycle is repeating with modern AI systems. Large language models may feel like autonomous intelligence, but under the hood they’re statistical systems trained on vast amounts of human-generated data, from code repositories to forum discussions. In other words, what looks like artificial intelligence is often an aggregation of human intelligence at scale, packaged through powerful models and cloud infrastructure.

Why does it matter?

When we mistake AI for independent intelligence, we risk overestimating its capabilities and overlooking the human knowledge that powers it. Seeing AI as infrastructure for human thinking helps organizations design tools that augment decision-making instead of blindly trusting machine outputs.

What Else Is Happening❗

🔄 Anthropic launched a tool to import user preferences and context from rival chatbots into Claude with a single copy-paste, while expanding memory features to free users.

⚡ OpenAI also rolled out GPT-5.3 Instant as ChatGPT’s new default model, focusing on more natural conversations while reducing hallucinations and the platform’s previously criticized tone.

🤝 Microsoft introduced Copilot Cowork for Microsoft 365, an AI feature built with Anthropic that runs multi-step tasks across apps and generates deliverables like decks and reports in the background.

📉 An Anthropic study finds AI hasn’t caused mass layoffs yet, but hiring for younger workers in highly automatable roles has dropped as tools like Claude handle more tasks.

📊 a16z’s latest Consumer AI Top 100 report shows ChatGPT still leading with 900M weekly users, while rivals like Claude and Gemini rapidly grow paid subscriptions.

💻 OpenAI is reportedly developing its own internal code repository platform to replace GitHub, potentially expanding it with Codex agents and opening it to external developers.

🔐 Anthropic revealed Claude Opus 4.6 found 22 Firefox vulnerabilities in two weeks alongside Mozilla engineers, including 14 high-severity flaws now patched for users.

🎓 OpenAI introduced a learning impact framework with Stanford and the University of Tartu to track whether ChatGPT improves student knowledge retention over time.

New to the newsletter?

The AI Edge keeps engineering leaders & AI enthusiasts like you on the cutting edge of AI. From machine learning to ChatGPT to generative AI and large language models, we break down the latest AI developments and how you can apply them in your work.

Thanks for reading, and see you next week! 😊
