AI Supremacy is a Newsletter about AI at the intersection of business, innovation, technology, society, and the future of civilization. Follow me as Read Futurist.

Seventy-five years ago, the idea of harnessing the power of the skies was little more than fantasy spun by futurists like Arthur C. Clarke and Isaac Asimov.

What if we are about to see it happen in our generation?

~

Good Morning,

This piece is going to read a bit differently from my usual pieces, because it’s a topic I’ve been considering for some time (alongside my coverage of space-tech companies and Neo Cloud observations).

This weekend (basically the last four days) I’ve been frantically pondering how scaling AI requires not just a better semiconductor supply-chain, but a radically improved energy source and cost-efficiency structure. We have the nascent technology to manifest this already. But as a civilization are we able to do it? Who are the major players going to be?

On the topic of orbital datacenters, it’s worth considering a more futuristic solution to AI infrastructure and energy efficiency. To me, that might be trans-orbital manufacturing, space mining and scaling AI with the energy of the Sun. Before 2026 this read like science fiction, but it is not as far off as you might think.

This is because rocket launches are getting cheaper and far more numerous, while the energy bottleneck for AI and capex becomes far more intense in the decade ahead due to new (fairly dirty and inefficient) datacenter projects. My base case is that lunar and orbital bases will be accelerated, space manufacturing will become the norm, and new concepts around AI datacenters in space will proliferate, even in the late 2020s.

It won’t be enough to spend a trillion dollars on capex (like they will in 2027); scaling will require a radically more energy-efficient and cost-efficient solution.

The Catalyst

When SpaceX goes public in an IPO later in 2026 (maybe as early as June), the promise of the future isn’t just about datacenters. It’s about harnessing more of the Sun’s energy to fuel AI at a scale that isn’t currently possible with U.S. energy grid constraints, or even with terrestrial datacenters.

This is easily one of the most important Elon Musk interviews ever. Read the transcript here; we are going to be going over it quite a bit.

Watch it on YouTube

The Rise of BigAI

Imagine a future where OpenAI, Anthropic and SpaceX (where xAI is now merged as a subsidiary) all become key AI Cloud computing full-stack companies. The one with the most compute will have an absurd advantage over the others, if you believe scaling AI with more compute will matter.

Let me repeat: they will all become Cloud computing hyperscalers in their own right too. Orbital datacenters and future space infrastructure afford an AI Cloud at planetary scale. 🌍 There is no decent concept of this in 2026; it is a pioneering landscape.

AI will press new Cloud Computing companies into existence:

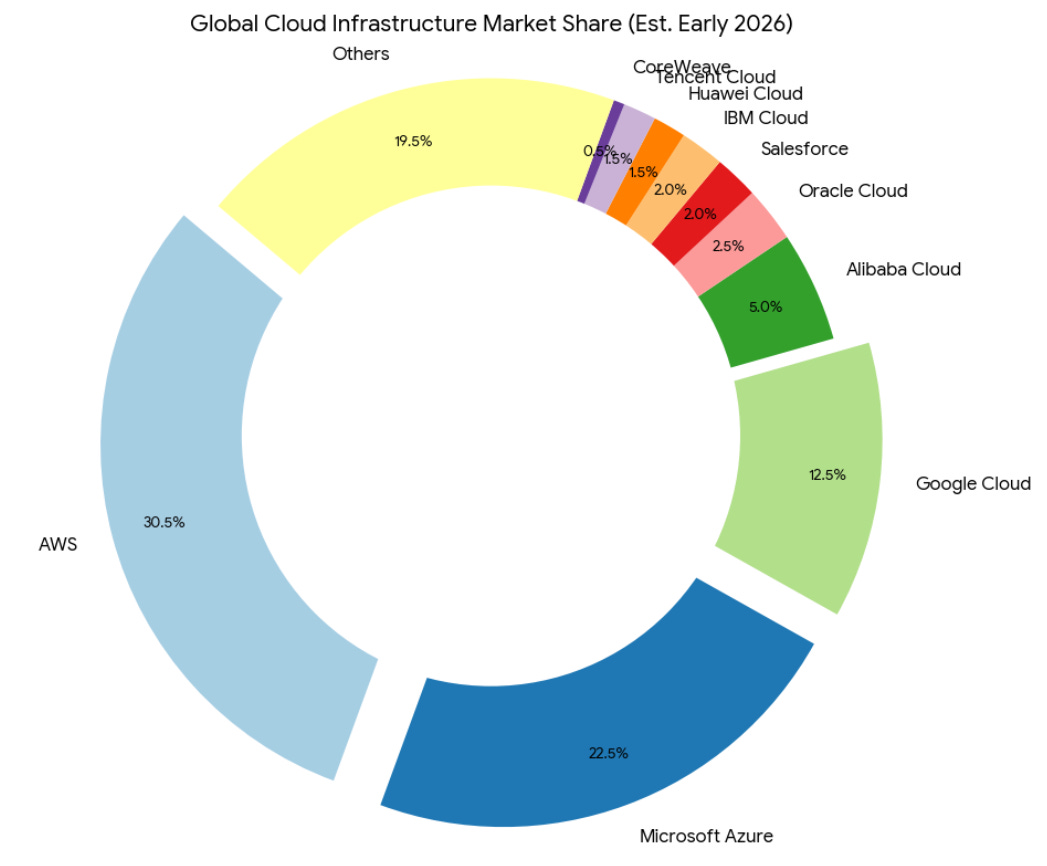

The current leaders of cloud computing, AWS, Azure, Google Cloud, Alibaba, Oracle and Huawei among others, will soon have company. The rise of BigAI affords new entrants that I believe could include SpaceX, Anthropic, OpenAI, ByteDance, CoreWeave, Crusoe, Nscale, and Nebius. That future world will have so much more compute, orders of magnitude more than today in 2026, that it is difficult to describe or project. Remember, I’m assuming the demand for compute increases exponentially; that is my base case.

What will the AI Cloud with Orbital Datacenters even look like?

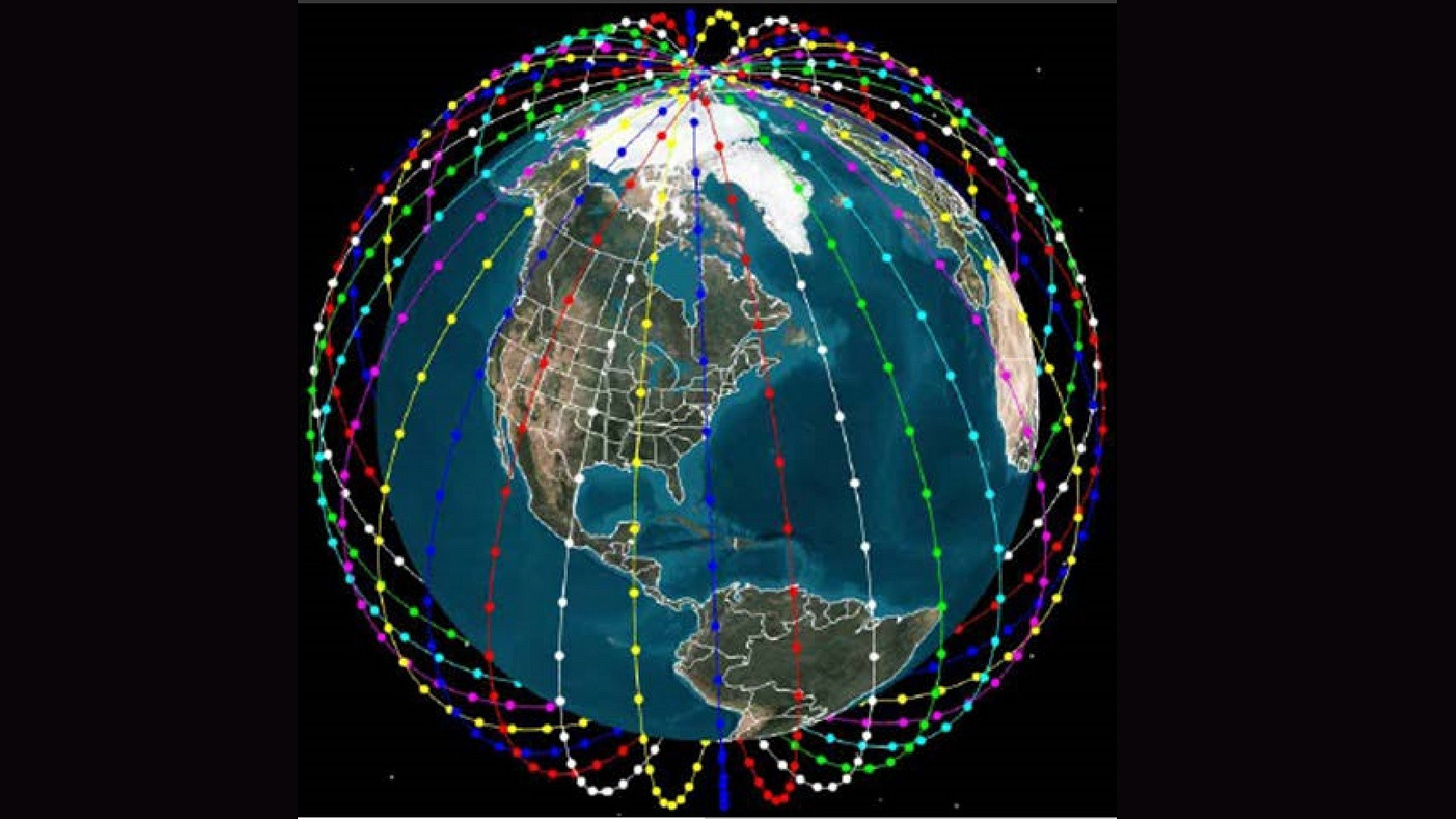

But it’s still hard to imagine an Earth with hundreds of thousands of specialized satellites and space infrastructure centers, many acting as datacenters with solar arrays. That is because, according to Harvard astrophysicist and space object cataloger Jonathan McDowell, there were only a paltry 14,518 active payloads in Earth orbit as of the end of January 2026, 9,555 of them belonging to Starlink.

The Neo Clouds of the future won’t just be built in the U.S. and on Earth, but in space. It all has to do with the energy efficiency and cost per token that will be required to reduce the cost of inference as global demand for compute increases exponentially. There are obviously a lot of technical, engineering and maintenance problems to be solved. The problems for scaling datacenters are already myriad, including some U.S. states pausing new datacenter construction.

Bottlenecks of Compute

There are multiple levels of bottlenecks to datacenters and scaling AI that encompass energy, chips, talent and supply issues.

- Energy
- HBM (high-bandwidth memory) chips
- TSMC-like fabs at 2nm scale and beyond
- Cost of inefficient terrestrial datacenters
- The U.S. electricity grid (specifically)
- The lack of renewable energy investment (United States, e.g. under the Trump Administration)
- Cost of hardware and fabs: the capital expenditure (capex) for a top-tier chip fabrication plant (fab) can now exceed $20–30 billion.
- Regional and community opposition to datacenter projects (due to many factors, including rising electricity costs at the state and national levels)
- Permits, delays and the substation bottleneck: for example, in major hubs like Northern Virginia and Phoenix, the wait time for a new high-voltage utility hookup has stretched to 5–7 years.
- Skilled labor shortages for datacenter buildouts: e.g., a shortage of M&E (Mechanical & Electrical) specialists.
- Semiconductor talent and experience shortage (United States)
- GPU production supply (e.g. Nvidia, AMD, Google ASICs, etc.)
- Capex and costs of datacenters and GPUs as a whole at scale (debt financing, corporate bonds, shareholder backlash)
- General energy inefficiency of terrestrial datacenters (e.g. cooling in warmer climates, water, day-night cycle, sovereign and geopolitical conflicts)

How to meet growing demand for compute?

Problem: Scaling AI fully will require more energy, faster, than is possible with legacy datacenters.

If Elon Musk and others who agree with him are right, the way we power, build and scale compute will be very different in the 2030s and 2040s.

Experts and analysts are, however, skeptical of Musk’s timeline.

Prof Julie McCann and Prof Matthew Santer, the co-directors of the school of convergence science in space, security and telecoms at Imperial College London, say solar-powered datacentres could be a future option for AI companies. – The Guardian

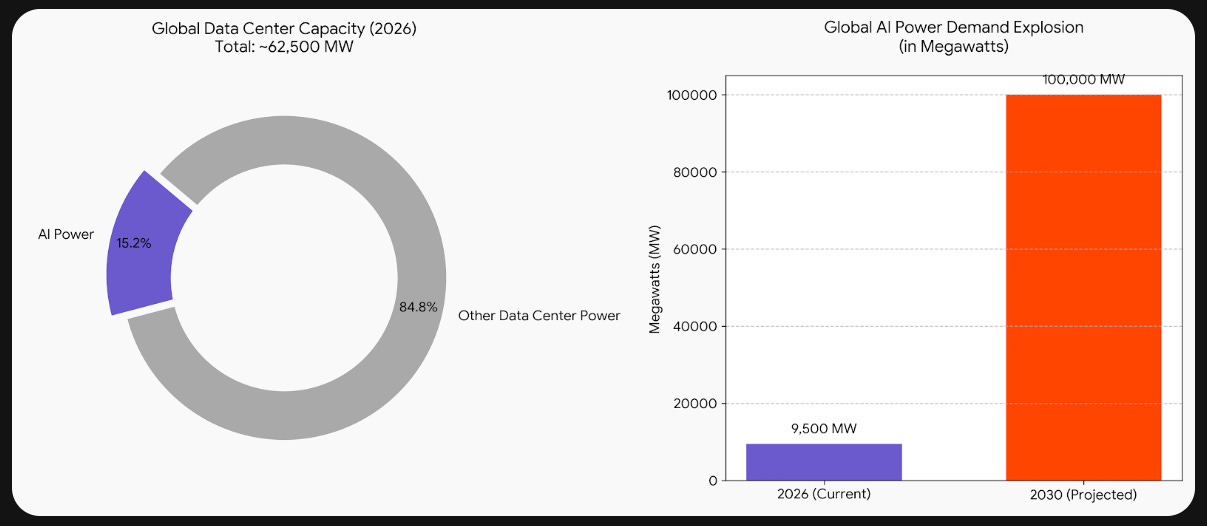

Current Levels

-

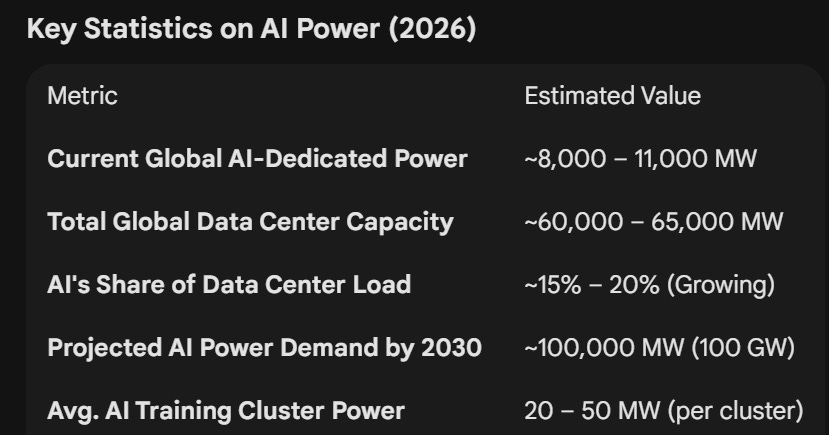

Global data center capacity is currently sitting at approximately 110–120 GW.

-

For context, the industry is on a trajectory to reach 200 GW by 2030.

-

In 2026 alone, developers are expected to spend over $280 billion just on the physical facility shells, power systems, and cooling infrastructure, roughly $700 billion in AI related capex just from Hyperscalers including Oracle and CoreWeave.

-

This is before China has decided to scale up capacity at similar levels to the U.S.

-

Training vs. Inference: In 2026, for the first time, AI inference (running models for users) is starting to rival AI training in total energy share.

-

The 10x Growth Curve: The supply of power specifically “spoken for” by AI is expected to skyrocket from ~9,500 MW today to over 100,000 MW (100 GW) by 2030.

-

As of early 2026, the global “supply” of power dedicated to AI is roughly 8,000 to 11,000 Megawatts (8–11 GW).

Now imagine a world that needs 100x or 1,000x that. How would that be achieved? For context, here is one way to visualize it: 1 MW can power roughly 750 to 1,000 homes.

Even the largest AI datacenter clusters won’t be sufficient to meet the likely and imminent demand. They will also be expensive to build and maintain, and expensive to refresh as older GPUs are replaced with frontier ones. Powering them won’t make sense either, and nuclear is way too slow to build and scale. The batteries needed, the day/night cycle, and the extra power layers required for things like cooling and seasonal variation just don’t make sense at scale. It’s massively inefficient.
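To make the 100x and 1,000x scenarios a little more concrete, here is a rough back-of-the-envelope sketch in Python. It only combines two figures already quoted above (8,000 to 11,000 MW of AI power today, and 750 to 1,000 homes per MW); the function name and the low/high pairing are my own illustrative assumptions, not an industry model.

```python
# Back-of-the-envelope only: both inputs are the rough ranges quoted in the text.
AI_POWER_MW = (8_000, 11_000)   # early-2026 power dedicated to AI, low/high (MW)
HOMES_PER_MW = (750, 1_000)     # homes that 1 MW can power, low/high

def homes_range(multiplier: int) -> tuple[int, int]:
    """Return (low, high) homes-equivalent for a multiple of today's AI power."""
    low = AI_POWER_MW[0] * multiplier * HOMES_PER_MW[0]
    high = AI_POWER_MW[1] * multiplier * HOMES_PER_MW[1]
    return low, high

for m in (1, 100, 1_000):
    low, high = homes_range(m)
    print(f"{m:>5}x: {low / 1e6:,.0f} to {high / 1e6:,.0f} million homes-equivalent")
```

On these rough numbers, today's AI power draw already equals several million homes, and the 1,000x case lands in the billions of homes-equivalent, which is why I don't think terrestrial grids alone get us there.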

SpaceX Needs to go public to Raise More Funds

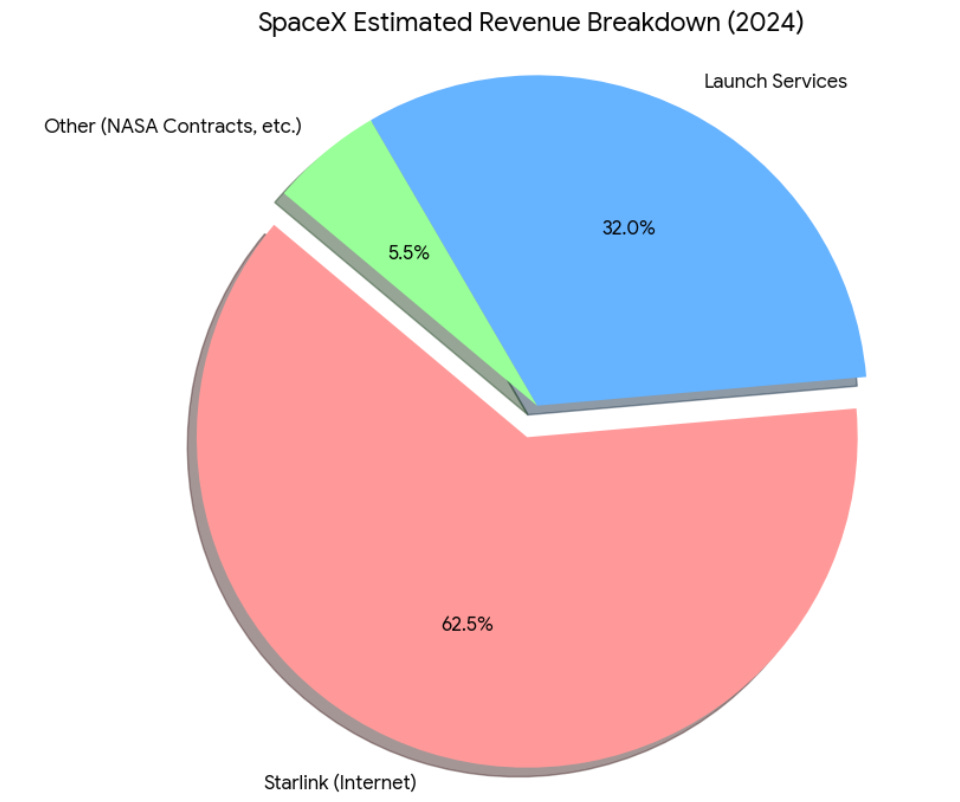

SpaceX will soon have a lot more competition for launch services from Blue Origin, Rocket Lab, Firefly Aerospace and others. Meanwhile Starlink has become the cash-cow for SpaceX and can help fund xAI’s extreme cash burn.

It’s not just SpaceX, though. There are many companies investing in the possibility of orbital datacenters and solar capture in space at scale. I estimate the SpaceX IPO could raise around $45 to $55 billion.

Blue Origin has AWS Synergy

Blue Origin is the leader in AI infrastructure plans that nobody is talking about, and for obvious reasons it also has AWS synergy. It is building a “seamless sky-to-ground” cloud. While SpaceX has to build its software stack from scratch, Blue Origin is integrating AWS Snowcone devices and Greengrass software directly into its architecture. As you might already know, in January 2026 Blue Origin announced TeraWave, a 5,400-satellite network specifically for “data centers and government.” Unlike Starlink (which is for everyone), TeraWave is a specialized, high-bandwidth “interstate highway” for massive data movement. Deployment starts in Q4 2027.

I predict Blue Origin will IPO in 2027, roughly six to eighteen months after SpaceX. There are also some obvious reasons why Blue Origin might compete favorably with SpaceX here: namely the New Glenn rocket, which successfully aced its initial 2025/2026 launches and has a much larger fairing (7 meters) than Falcon 9. This allows Blue Origin to launch larger, more cooling-intensive server modules that SpaceX might struggle to fit in a standard Starlink chassis.

I believe Blue Origin has been thinking about orbital datacenters and doing the R&D for space infrastructure for years, working on those specific problems with some of the best minds in the world. You can still argue that Blue Origin is fairly far behind SpaceX’s launch cadence and first-mover advantage.

SpaceX Cadence and Flight Volume

I expect the three main players at first to be SpaceX, Blue Origin and China, although there are some other interesting ones.

SpaceX has a “launch, fail, learn” speed that Blue Origin hasn’t matched. SpaceX is already filing FCC paperwork for “millions” of satellites designed to host AI compute, essentially turning the Starlink shell into a global supercomputer. In the interview, Musk makes some fairly ambitious claims about the launch volume that could be required to meet his targets. Those volumes don’t make a whole lot of sense to me.

Starcloud Accelerates Plans

Days after SpaceX filed its plan for a constellation of one million satellites with the FCC, Starcloud is looking to build its own 88,000-satellite constellation for AI data centers. I predict Alphabet acquires Starcloud in 2028 or 2029.

Founded in 2024, Starcloud (formerly known as Lumen Orbit) is a pioneering aerospace and infrastructure startup focused on building large-scale AI data centers in space. Headquartered in Redmond, Washington, the company’s core mission is to solve the terrestrial “power crisis” facing the AI industry.

Starcloud talks up the benefits of space-based data centers in its FCC submission. Their plans are fairly aggressive.

China joins race to develop space-based data centers with 5-year plan

The state-run China Global Television Network (CGTN) reported on Thursday (Jan. 29) that the main Chinese space company, the state-owned China Aerospace Science and Technology Corporation (CASC), will work on space-based data centers as a part of a larger five-year plan to expand the nation’s already significant presence in space.

The five-year plan also includes efforts to expand resource development, like asteroid mining. Furthermore, the plan cites space debris monitoring and an expansion into space tourism. The rocket company that I’m watching the most in China is Galactic Energy (Beijing Xinghe Dongli Space Technology Co., Ltd.), a leading private Chinese aerospace company. Founded in February 2018, it has quickly become one of the most successful commercial launch providers in China. If you know anything about China, huge infrastructure projects are sort of their specialty. That won’t be any different in space or in lunar projects.

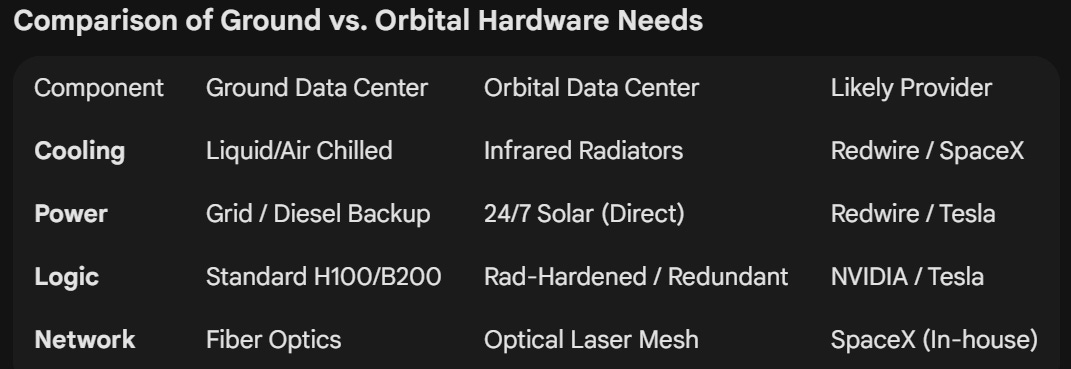

Why Redwire Might Matter ☀️

There are many companies that benefit if the Space AI race becomes a real thing. Redwire, which went public rather recently, is involved in various aspects of space infrastructure. It’s a leading contender to build the power and cooling infrastructure that makes those servers possible. Redwire’s signature technology, ROSA (Roll-Out Solar Array), is the industry standard for high-density power. Redwire is a publicly traded company with a market cap of just $1.66 billion today. It’s one of my picks for a “picks and shovels” company of the future in space. I also think it will win a lot of defense contracts, because its technology is too important.

As you might know, they provided the arrays for the International Space Station and have recently signed deals with Axiom Space and Blue Origin. In my view, if (and as) orbital datacenters scale to “petaflop-class” constellations, they will require solar wings larger and more efficient than anything currently flying. Redwire’s flight heritage here makes them the “default” choice for any datacenter startup.

I don’t agree with many of Elon Musk’s ideas and the various promises he has historically made (many of which didn’t come to fruition on his timeline, and it’s debatable whether they ever did), but his belief in solar power is one point where I’m in full agreement with him.

While Musk is not the most eloquent (or clear) speaker, let’s try to parse some of the most consequential statements he made on this:

What Elon Musk is Saying

1

“The availability of energy is the issue. If you look at electrical output outside of China, everywhere outside of China, it’s more or less flat.” – Elon Musk

Again, I invite you to watch the orbital datacenter segment of the interview and view the transcript here.

2

In space the energy bottleneck is removed, so scaling faces far less friction from delays.

“It’s harder to scale on the ground than it is to scale in space. You’re also going to get about five times the effectiveness of solar panels in space versus the ground, and you don’t need batteries.” – Elon Musk

3

HBM shortages and other hardware supply-chain problems are causing huge delays already in datacenter projects.

“Those who have lived in software land don’t realize they’re about to have a hard lesson in hardware. It’s actually very difficult to build power plants. You don’t just need power plants, you need all of the electrical equipment. You need the electrical transformers to run the AI transformers.” – Elon Musk

4

Elon clearly believes in Solar Energy as the backbone of scaling AI compute.

“Both SpaceX and Tesla are building towards 100 gigawatts a year of solar cell production.” – Elon Musk

5

Elon Musk is insinuating that China knows their investment in renewable energy like Solar has a future in space.

“Solar cells are already very cheap. They’re farcically cheap. I think solar cells in China are around $0.25-30/watt or something like that. It’s absurdly cheap. Now put it in space, and it’s five times cheaper. In fact, it’s not five times cheaper, it’s 10 times cheaper because you don’t need any batteries.” – Elon Musk

6

“So the moment your cost of access to space becomes low, by far the cheapest and most scalable way to generate tokens is space. It’s not even close. It’ll be an order of magnitude easier to scale.” – Elon Musk

7

Elon Musk believes that orbital datacenters remove many of the barriers to scaling AI faster.

“The point is you won’t be able to scale on the ground. You just won’t. People are going to hit the wall big time on power generation. They already are. The number of miracles in series that the xAI team had to accomplish in order to get a gigawatt of power online was crazy.” – Elon Musk

8

Elon Musk suggested several times that the hardware, permit and energy requirements are much more intensive than most people think.

“What you probably need at the generation level to service 330,000 GB 300s—including all of the associated support networking and everything else, and the peak cooling, and to have some power margin reserve—is roughly a gigawatt.” – Elon Musk

9

Elon Musk made several comments about hardware bottlenecks in natural gas generation (e.g. turbines). The gas turbine backlog and blades problem is already severe.

“As I said, we are scaling solar production. There’s a rate at which you can scale physical production of solar cells. We’re going as fast as possible in scaling domestic production.” – Elon Musk

10

Elon Musk envisions a lot of energy generation and a lot of launches to build the orbital energy-grid he’s proposing SpaceX will lead.

“If you say five years from now, I think probably AI in space will be launching every year the sum total of all AI on Earth. Meaning, five years from now, my prediction is we will launch and be operating every year more AI in space than the cumulative total on Earth.”

11

The number of launches this will require from SpaceX and how to pay for it remains a bit concerning in some of Elon’s forward statements:

“SpaceX is gearing up to do 10,000 launches a year, and maybe even 20 or 30,000 launches a year.” – Elon Musk

Elon sees SpaceX becoming a hyperscaler (cloud computing provider) of planetary scale.

12

DP: So, SpaceX is going to become a hyperscaler?

“Hyper-hyper. If some of my predictions come true, SpaceX will launch more AI than the cumulative amount on Earth of everything else combined.”

About the advantages of taking SpaceX public:

13

“There’s obviously a lot more capital available in the public markets than private. It might be 100x more capital, but it’s way more than 10x.” – Elon Musk

There were a lot of interesting quotes in the interview, and you have to take them in the context of a company that might go public in as few as four months.

14

“Speed is important. I’m generally going to do the thing that… I just repeatedly tackle the limiting factor. Whatever the limiting factor is on speed, I’m going to tackle that. If capital is the limiting factor, then I’ll solve for capital. If it’s not the limiting factor, I’ll solve for something else.” – Elon Musk

15

There are a bunch of temporary and intermittent Energy technologies, but true scale is much easier with Solar according to Musk.

“The way you think about scaling long-term is that Earth only receives about half a billionth of the Sun’s energy. The Sun is essentially all the energy. This is a very important point to appreciate because sometimes people will talk about modular nuclear reactors or various fusion on Earth.” – Elon Musk

16

The scale and speed of SpaceX’s plans don’t make much sense from any realist’s perspective. But it was entertaining hearing Elon Musk consider his timeline.

“To reach a large volume in, say, 36 months, to match the rocket payload to orbit… If we’re doing a million tons to orbit in, let’s say three or four years from now, something like that… We’re doing 100 kilowatts per ton. So that means we need at least 100 gigawatts per year of solar. We’ll need an equivalent amount of chips. You need 100 gigawatts worth of chips. You’ve got to match these things: the mass to orbit, the power generation, and the chips.” – Elon Musk
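The matching exercise Musk describes here is simple arithmetic, so it is worth checking. The sketch below uses only his stated figures (a million tons to orbit per year, 100 kilowatts per ton). The chip estimate reuses his separate rule of thumb from quote 8 (roughly 330,000 GB300s per gigawatt), which he gave for a ground-based cluster including cooling and reserve margin, so treat that last number as a loose assumption on my part rather than anything he stated for orbit.

```python
# Figures taken from the Musk quotes; variable and function names are illustrative.
TONS_TO_ORBIT_PER_YEAR = 1_000_000   # "a million tons to orbit"
KW_PER_TON = 100                     # "100 kilowatts per ton"
GB300_PER_GW = 330_000               # quote 8: ~330,000 GB300s per GW (terrestrial rule of thumb)

def orbital_power_gw(tons: float, kw_per_ton: float) -> float:
    """Power launched per year, converted from kW to GW."""
    return tons * kw_per_ton / 1e6

power_gw = orbital_power_gw(TONS_TO_ORBIT_PER_YEAR, KW_PER_TON)
chips = power_gw * GB300_PER_GW  # assumption: terrestrial ratio carries over to orbit

print(f"Power launched per year: {power_gw:.0f} GW")
print(f"GB300-class chips implied: {chips / 1e6:.0f} million per year")
```

So the 100 GW figure does follow from his own inputs. The implied chip volume, on the order of tens of millions of GB300-class parts per year, is the piece that makes the "you need 100 gigawatts worth of chips" comment so demanding.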

17

Elon Musk clearly is dead-set on making SpaceX (that will include Tesla eventually) an Energy, Cloud, AI and compute powerhouse. Building their own Fabs is part of that self-sufficiency. He believes this can basically solve the Energy and compute bottleneck entirely. I’m just not sure how he pays for the highly ambitious plan.

“Again, bearing in mind that average power usage in the US is 500 gigawatts. So if you’re launching, say 200 gigawatts, a year to space, you’re sort of lapping the US every two and a half years. All US electricity production, this is a very huge amount.” – Elon Musk
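The "lapping the US" claim is another one that is easy to sanity-check. This minimal sketch takes Musk's two numbers at face value (500 GW average US power usage, 200 GW launched per year) and ignores satellite lifetime, degradation and utilization, which are my simplifying assumptions.

```python
# Inputs are the figures from the quote; everything else is a simplification.
US_AVERAGE_POWER_GW = 500  # "average power usage in the US is 500 gigawatts"

def years_to_match_us(launched_gw_per_year: float) -> float:
    """Years of launches needed to accumulate the US average power figure."""
    return US_AVERAGE_POWER_GW / launched_gw_per_year

for rate in (100, 200):
    print(f"{rate} GW/year -> {years_to_match_us(rate):.1f} years to match the US")
```

At 200 GW per year the answer is the two and a half years he cites; at the 100 GW per year solar target it would be five.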

There’s going to be a huge emphasis on lunar infrastructure development, as SpaceX, Blue Origin and the U.S. Genesis Mission are all pivoting in that direction, hoping to beat China to these objectives.

18

“Well, the lunar soil is 20% silicon or something like that. So you can mine the silicon on the moon, refine it, and create the solar cells and the radiators on the moon. You make the radiators out of aluminum. So there’s plenty of silicon and aluminum on the moon to make the cells and the radiators. The chips you could send from Earth because they’re pretty light. Maybe at some point you make them on the moon, too.” – Elon Musk

19

I really found the interview fairly fruitful for understanding Elon Musk’s conception and expectations of AI better and his vision of xAI (and Tesla) merging into SpaceX. He’s obviously going to do his best with solar and Satellites and his company is going to contribute to Lunar infrastructure and potentially Martian colonization.

Even if others simply follow his lead to orbital datacenters with solar arrays, it means fewer datacenters will need to be built on Earth (which is ultimately likely a good thing). Or his concept of orbital datacenters could prove too expensive to build and maintain. We’ll see soon enough.

SpaceX is hoping to be the biggest IPO in history by 2x:

“Reality is the best verifier.”

China is also building Deep Sea Datacenters

The only country in the world experimenting seriously with AI Infra in the ocean is China. There have been several experimental underwater projects — like Microsoft’s Project Natick, which concluded in 2020 — but currently, the only operational, commercial underwater data center in the world is in Hainan Province in China.

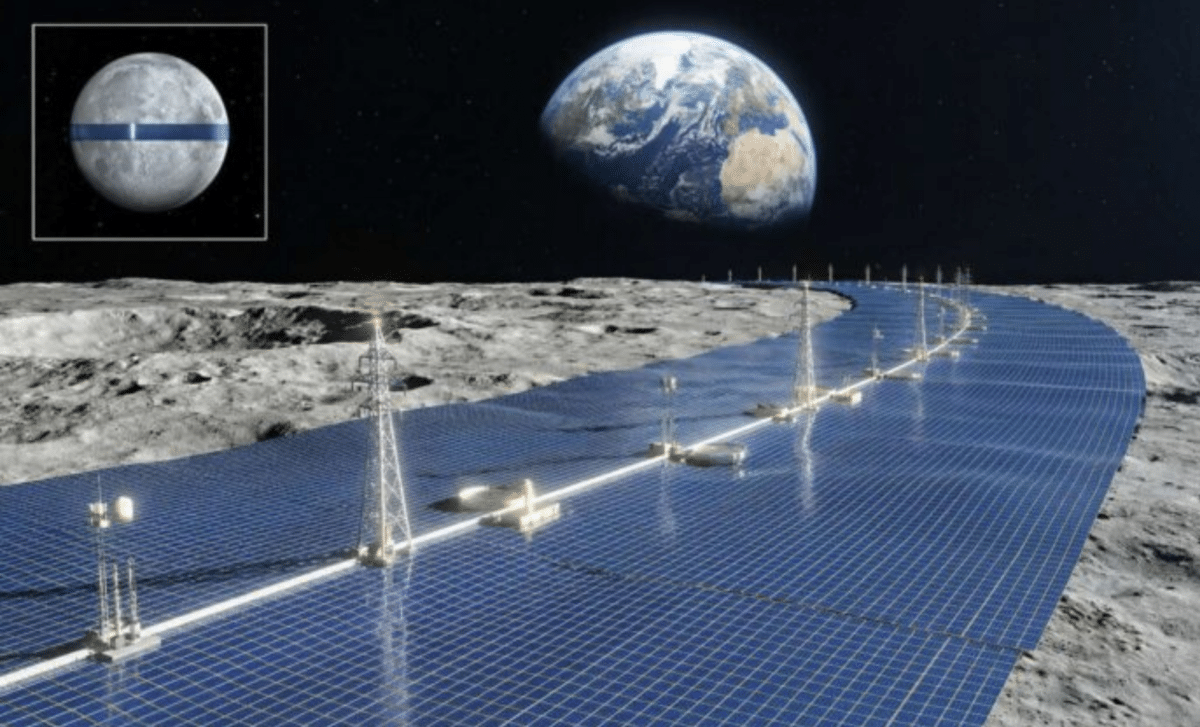

Japan Plans to Create a Solar Ring Around the Moon to Power Earth

If orbital datacenters around the Earth aren’t practical, lunar Infrastructure is going to become a big deal in the 2030s. There are many startups already thinking about this.

Shimizu Corporation’s Luna Ring concept could transform global energy by harnessing the Moon’s solar power and beaming it back to Earth. The U.S. wants to beat China to lunar bases and infrastructure, which is one of the real reasons it wants to increase national defense spending so much in 2027.

According to Shimizu Corporation, this idea could transform the way humanity generates and consumes energy. The Luna Ring concept envisions a 250-mile-wide ring of solar panels around the Moon’s equator that would capture solar energy and beam it back to Earth using microwave and laser technology.

Musk stated in February 2026 that a “self-growing city” on the Moon could be achieved in less than 10 years, while Mars would likely take 20+ years.

The 2030s race to the Moon has as much to do with space manufacturing, the future of energy and AI as with national security. Space mining and cheaper missions to Mars are believed to be far easier from the Moon. It is likely the same for solar arrays at scale in space.

SpaceX will try its best to do everything in-house and even have Tesla build its own fabs, but it might have to outsource some things at the beginning. There will be many important players in the Space Infra era.

Companies Involved in Orbital Datacenters

Think about aspects of all kinds of hardware SpaceX would need. Think about pioneering space-tech companies and future synergies for how this all develops. Who would SpaceX, Blue Origin, Starcloud and others likely partner with on the hardware side?

If you are an investor, I want you to open your mind.

I invite you to do your due diligence on the following:

- Blue Origin
- SpaceX
- Nvidia (and other chip makers)
- SK Hynix
- Starcloud
- Redwire
- Lonestar Data Holdings
- Interlune (Energy, Helium-3)
- Galactic Energy
- Relativity Space
- Northrop Grumman
- Rocket Lab
- ADAspace
- Xingyi Xineng
- Aetherflux
- Planet Labs
- AI Server companies (e.g. Hewlett Packard Enterprise)
- Skyloom
- Varda Space Industries
- Crusoe (via partnerships, e.g. with Axiom)
- SpaceBilt
- Salutes Space
- Honeywell
- Tesla
- Microchip Technology
- BAE Systems
- Mynaric
- Zhejiang Lab (ZJ Lab)
- Rendezvous Robotics
- Dcubed
- Junda (Hainan Drinda)
- Jinko
- Maxwell Technologies
- Corning
- Amazon (Kuiper)
- Shimizu Corporation (Japan)

Google Project Suncatcher ⋆☀︎。

Google is thinking about this with Project Suncatcher. The problem is they are thinking about it like a moonshot and not a race. Announced in late 2025, this project focuses on solar-powered satellite computing platforms.

As of February 2026, the prospect of orbital data centers has moved from “science fiction” to a legitimate, albeit high-risk, infrastructure race. SpaceX is targeting 100 GW of orbital AI compute, powered by near-constant solar energy and launched via Starship.

If they want to be competitive, OpenAI, Meta, Microsoft and Alibaba will have to think about this more seriously in the 2020s. Alphabet is all but assured to be a competitor as is Amazon with Blue Origin. Some won’t be able to follow. OpenAI has already over-invested in its Stargate infrastructure plans.

Project Suncatcher envisions a distributed network of smaller components. Here is a bit about the engineering and physics of it, in case you are confused:

- Solar-Powered Satellites: Constellations of satellites equipped with high-efficiency solar panels. Because there is no atmosphere or night cycle in certain “dawn-dusk” orbits, these panels can generate up to 8x more power than those on Earth.
- Onboard TPUs: The satellites carry Google’s custom Tensor Processing Units (TPUs), the same chips used to train and run models like Gemini, allowing AI tasks to be processed directly in orbit.
- Space Lasers (Optical Links): To act as a single “orbital brain,” the satellites communicate with each other using free-space optical links (lasers) capable of transferring terabits of data per second.
- Edge Computing in Space: By processing data (like satellite imagery) in orbit, only the final “insights” need to be sent back to Earth, drastically reducing the bandwidth required for downlinking.

Google won’t be moving fast here: Google plans to launch two prototype satellites equipped with custom AI chips (likely TPUs) by early 2027.

Logos Space Services 🛰️

There will also be many pure-play newcomers. Aspiring Starlink competitor Logos Space Services has secured FCC clearance to launch more than 4,000 broadband satellites into low Earth orbit by 2035. This is another company with ties to Alphabet. The company is headed by its founder, Milo Medin, a former project manager at NASA as well as a former vice president of wireless services at Google.

I see this as a competitor to Blue Origin’s TeraWave or Amazon’s own Amazon Leo project. The company has been raising money since it opened its doors in 2023 and reportedly hopes to deploy its first satellite by 2027. Logos’ planned low Earth orbit constellation would beam high-speed broadband internet to customers worldwide, seemingly with a focus on government and enterprise users.

“Launching a constellation of a million satellites that operate as orbital data centers is a first step towards becoming a Kardashev II-level civilization” – Elon Musk

Risks of Having too Many Earth Orbit Satellites

Due to the risks, companies like SpaceX and Blue Origin are shifting their priorities to lunar development, which in my view is going to skyrocket in the 2030s and 2040s. The proposed system would place computing-enabled satellites across multiple orbital shells, operating at altitudes ranging from 500 kilometers to 2,000 kilometers above Earth.

As of 2026, the sheer number of satellites in Low Earth Orbit (LEO) has transformed from a scientific achievement into a complex traffic management challenge. Adding hundreds of thousands more will be challenging.

According to The Register, the plan would increase the number of active satellites by nearly 6,800 percent, at which point today’s worries about Kessler Syndrome – a situation where Earth orbit becomes so crowded a chain reaction of collisions creates so much space debris that portions of Earth orbit become unusable – start to look quaint.
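For a sense of where a figure like that comes from, here is a quick check in Python. It assumes the baseline is McDowell's count of 14,518 active payloads quoted earlier and that the addition is SpaceX's proposed one million satellites; The Register may have used a slightly different baseline, so this is only meant to show the order of magnitude.

```python
# Assumed inputs: McDowell's end-of-January count and SpaceX's proposed constellation size.
ACTIVE_PAYLOADS = 14_518
PROPOSED_SATELLITES = 1_000_000

def percent_increase(current: int, added: int) -> float:
    """Percentage growth in the active population if `added` satellites are launched."""
    return added / current * 100

pct = percent_increase(ACTIVE_PAYLOADS, PROPOSED_SATELLITES)
print(f"Increase in active satellites: about {pct:,.0f}%")
```

On these inputs the increase comes out at roughly 6,900 percent, in the same ballpark as the figure reported, or close to 70 times the number of active payloads in orbit today.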

SpaceX’s application [PDF] to the FCC calls for its datacenter constellation to be in multiple orbital shells in altitudes between 500 km (310 miles) and 2,000 km (1,242 miles).

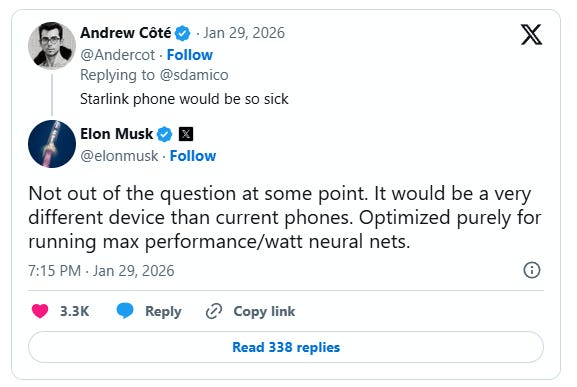

A Starlink Mobile Phone?

SpaceX is reportedly exploring new products tied to Starlink, including a potential Starlink-branded phone. SpaceX has reportedly discussed building a mobile device designed to connect directly to the Starlink satellite constellation. Elon Musk explicitly denied this on X, stating “We are not developing a phone” in response to those reports.

Reuters’ sources, however, seemed reliable. SpaceX frankly needs this direct connection (a personal hardware device) to scale xAI more reliably. Apple and OpenAI are already building AI wearable devices, and smart glasses by Apple, Google, Meta and Chinese firms are set to go live in 2027. Finally, Musk did note in late January 2026 that a mobile device isn’t “out of the question at some point,” but described any such thing as very different from current phones.

Conclusion

Scaling AI will require a lot more energy than Earth’s energy grids can provide, and it will take decades to come to full fruition. SpaceX and others will make space technology more investable and bring it closer to how civilization views its goals. Scaling AI will also be required to keep capacity in step with the spiraling, exponentially increasing demand for compute.

We also have to remember Elon Musk and Jeff Bezos with SpaceX and Blue Origin respectively have their long-term pet visions. Mars for Elon Musk and O’Neill cylinders for Jeff Bezos.

If hardware and energy are the determining factors in AI’s improvement, the way we scale up energy and the semiconductor industry needs to change. How effectively robotics can help us build in space may depend on AI, which in turn depends on how well we are able to harness more energy.

Scaling AI is an energy problem, and the future depends on us engineering space infrastructure. Building more datacenters that erode communities and their resources is not a sustainable, scalable long-term solution.

TL;DR Glossary

Did you find this all a bit overwhelming? Here are some easy-to-read bullet points, so you can think about this in bite-sized nuggets. ⚗️ Sounds sci-fi, I know, I know.

- Orbital Data Centers: SpaceX intends to move massive AI data centers into orbit within 30 to 36 months because space is an order of magnitude easier to scale for power than Earth.
- Energy Arbitrage: Space-based solar is viewed as 10 times cheaper than terrestrial solar because it lacks atmosphere, clouds, and the need for expensive battery storage.
- SpaceX-xAI Merger: The interview highlights the recent merger of SpaceX and xAI, creating a vertically integrated entity that controls both the launch capability and the AI hardware.
- Starship as a Utility: The plan relies on Starship reaching a launch cadence of roughly once per hour to deploy the 100 gigawatts of compute Musk envisions in orbit.
- Regulatory Bypass: Moving to space is a strategic “regulatory play” to bypass the years-long permitting delays for power plants and transmission lines on Earth.
- Mars & Consciousness: Musk reaffirms that SpaceX’s fundamental goal remains making life multi-planetary to preserve the “light of consciousness” against terrestrial risks.
- Supply Chain Dominance: SpaceX and Tesla have a new mandate to reach 100 gigawatts of annual solar production to support this massive expansion into orbital infrastructure.
- Lunar Mass Drivers: The long-term tech tree includes using lunar materials and mass drivers to build even larger structures in space more efficiently than launching from Earth.
- SpaceX is gearing up for an extreme launch cadence of 10,000 Starship launches per year, potentially scaling to 20,000–30,000, to deploy massive orbital AI data centers.
- Within about five years, SpaceX expects to launch and operate more AI compute capacity in space annually (hundreds of gigawatts) than the entire cumulative total on Earth.
- Achieving 100 gigawatts of orbital AI would require roughly 10,000 Starship launches per year, equating to about one launch every hour.
- Starship could achieve this extreme cadence with as few as 20–30 vehicles if rapid reusability allows turnaround in around 30 hours.
- SpaceX aims to reach a million tons to orbit per year within 3–4 years to support massive solar arrays and chips for orbital AI at ~100 kW per ton.
- The company is building toward 100 gigawatts per year of solar cell production (in collaboration with Tesla) from raw materials to finished cells to power orbital infrastructure.
- The biggest remaining technical bottleneck for Starship is a fully reusable heat shield that survives reentry without tile loss or major inspections, enabling rapid reflights.
- Starship Version 3 is designed for full reusability (unlike Falcon’s partial), making it the most complex machine ever built and key to multi-planet civilization.
- Long-term, SpaceX envisions a lunar mass driver using Moon resources (like silicon from lunar soil) to scale to petawatt-level AI launches per year.
- The ultimate SpaceX mission ties to Mars colonization by propagating intelligence (human and AI) off Earth to ensure survival, even if humanity on Earth faces catastrophe.
- The switch from carbon fiber to steel for Starship was critical to accelerate progress and timelines toward Mars, as steel proved cheaper and faster to produce at scale.
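Several of the bullets above are launch-cadence claims that can be cross-checked against each other. The sketch below is a deliberately simple model of my own: it assumes a 365-day year, a fixed 30-hour turnaround per vehicle, and no downtime or flight failures. The inputs (10,000 launches, a million tons, 20 to 30 vehicles) come from the summary; the function names are illustrative.

```python
import math

HOURS_PER_YEAR = 365 * 24  # 8,760 hours, ignoring leap years

def minutes_between_launches(launches_per_year: int) -> float:
    """Average gap between launches if they are spread evenly over the year."""
    return HOURS_PER_YEAR * 60 / launches_per_year

def launches_supported(vehicles: int, turnaround_hours: int) -> int:
    """Launches per year a fleet can fly if every vehicle reflies as soon as it is ready."""
    return vehicles * HOURS_PER_YEAR // turnaround_hours

def vehicles_needed(launches_per_year: int, turnaround_hours: int) -> int:
    """Smallest fleet that can sustain the given launch rate."""
    return math.ceil(launches_per_year * turnaround_hours / HOURS_PER_YEAR)

print(f"Gap at 10,000 launches/year: {minutes_between_launches(10_000):.1f} minutes")
print(f"Tons per launch for 1M tons/year: {1_000_000 / 10_000:.0f}")
print(f"Fleet of 20 at 30 h turnaround: {launches_supported(20, 30):,} launches/year")
print(f"Fleet of 30 at 30 h turnaround: {launches_supported(30, 30):,} launches/year")
print(f"Vehicles needed for 10,000 launches/year: {vehicles_needed(10_000, 30)}")
```

Under these assumptions, 10,000 launches a year works out to one launch roughly every 53 minutes carrying about 100 tons each, which matches the "once per hour" framing. A fleet of 20 to 30 vehicles at a 30-hour turnaround gets close but falls a little short of 10,000 in this simple model, so hitting the target would take a slightly larger fleet or a faster turnaround.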

I’m hoping this document can be a reference point for more AI enthusiasts on their way to becoming Space enthusiasts like I am (who are also investors who study companies).

If I get decent interest on this topic, I’m even open to starting an entirely new Newsletter around Space, space-tech companies and investing in them.

I appreciate the restack of this note by Michael Burry and his on-going dialogue with us on these AI bubble related issues. To see the full note go here

For the Interview there are other topics of interest that are related as well:

0:00:00 – Orbital data centers

0:36:46 – Grok and alignment

0:59:56 – xAI’s business plan

1:17:21 – Optimus and humanoid manufacturing

1:30:22 – Does China win by default?

1:44:16 – Lessons from running SpaceX

2:20:08 – DOGE

2:38:28 – TeraFab
