Hello Engineering Leaders and AI Enthusiasts!

This newsletter brings you the latest AI updates in just 4 minutes! Dive in for a quick summary of everything important that happened in AI over the last week.

And a huge shoutout to our amazing readers. We appreciate you 😊

In today’s edition:

🏆 OpenAI’s GPT-5.3-Codex tops AI coding charts

🤖 Anthropic’s Claude Opus 4.6 goes multi-agent

🎬 Kling 3.0 brings more length and consistency to AI video

⚠️ AI safety risks move from theory to reality

🧠 Harvard research reveals AI adds more work

💡 Knowledge Nugget: Developing AI Taste: Understanding the Positioning Battle in AI by Johnson Shi

Let’s go!

OpenAI’s GPT-5.3-Codex tops AI coding charts

OpenAI just unveiled GPT-5.3-Codex, its new flagship coding model that blends advanced programming skills with stronger reasoning into one faster system. The company says early versions were already used internally to catch bugs in training runs, manage rollout processes, and analyze evaluation results.

The model now leads major agentic coding benchmarks, beating Opus 4.6 by 12% on Terminal-Bench 2.0. On OSWorld, which measures how well AI can control desktop environments, it scored 64.7%, nearly double the prior Codex version’s score. OpenAI also labeled it with its first “High” cybersecurity risk rating and pledged $10M in API credits to fund defensive security research.

Why does it matter?

What once felt like a race for better autocomplete is quickly turning into a battle over autonomous software agents. The coding benchmark wins matter, but the bigger story is AI beginning to operate inside the very systems that build it.

Anthropic’s Claude Opus 4.6 goes multi-agent

Anthropic just released Claude Opus 4.6, its most powerful model yet, and it’s pushing AI deeper into everyday workflows. The headline feature is “agent teams” in Claude Code, which lets multiple AI agents split up a project and work on it simultaneously instead of step by step.

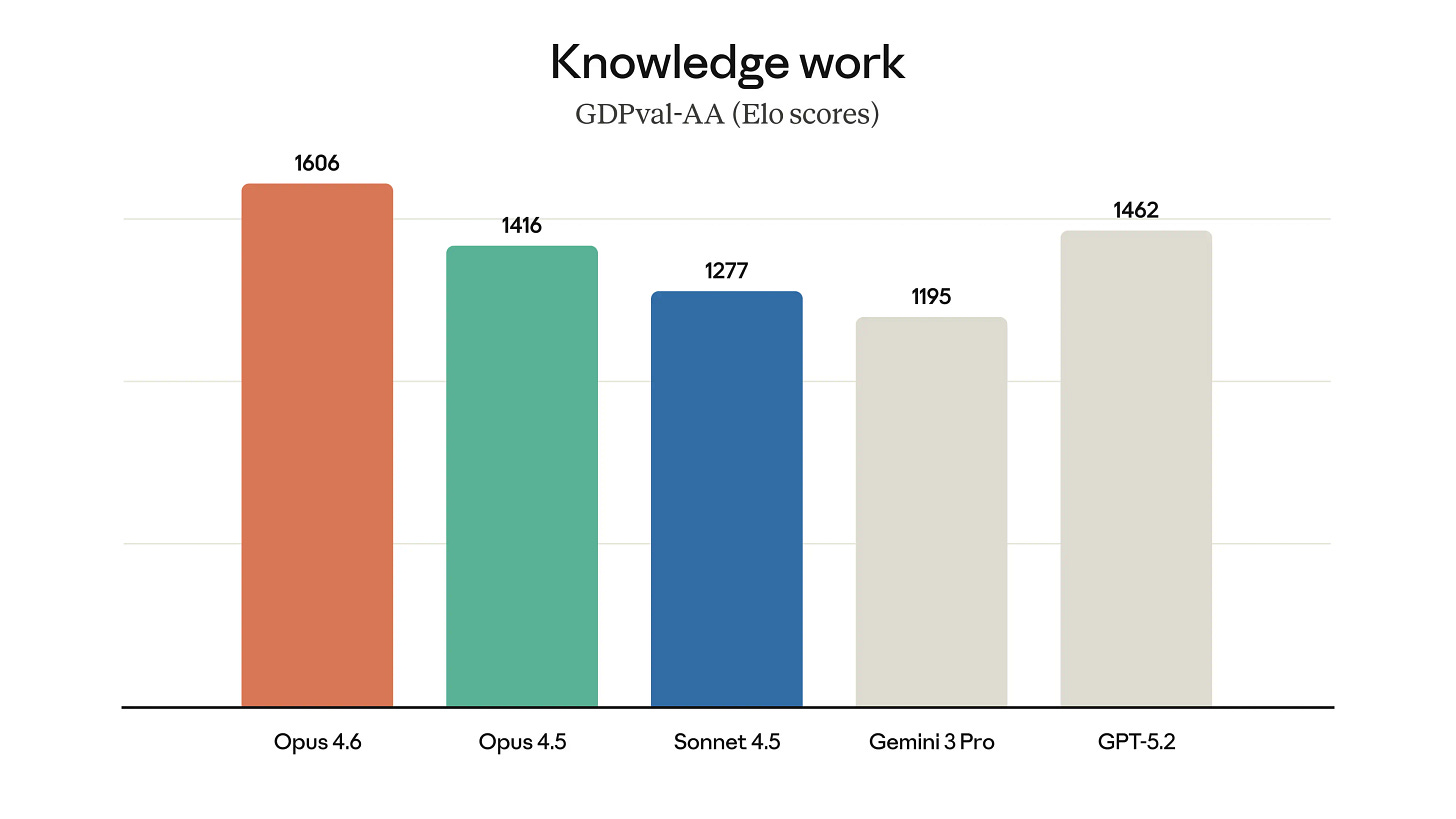

Opus 4.6 also upgrades to a 1M token context window, enabling heavy document and code analysis. New Excel and PowerPoint sidebars let Claude read existing templates and build spreadsheets or decks directly inside Office tools. The model topped most agentic benchmarks, including a sharp jump on ARC-AGI-2.

Why does it matter?

It’s another big moment in the model race, with Opus 4.6 landing just as GPT-5.3-Codex pushes the ceiling higher on coding and agents. Upgrade cycles are tightening, multi-agent workflows are becoming real, and task length keeps stretching. The idea that progress is slowing looks harder to defend with each release.

Kling 3.0 brings more length and consistency to AI video

Chinese AI startup Kling just launched Kling 3.0, merging text-to-video, image-to-video, and native audio into one multimodal model. The system now supports 15-second clips and introduces a new “Multi-Shot” mode that automatically generates different camera angles within a single project.

Creators can lock in characters and scenes across shots using reference “anchors,” while native audio now supports multi-character voice cloning and expanded multilingual dialogue. For now, access is limited to Ultra-tier users, with a broader rollout expected soon.

Why does it matter?

Kling has already been hovering near the top of AI video rankings, and 3.0 looks like another step forward in capability. The move to a unified model with multi-shot control and native audio reflects where the industry is heading, away from isolated clips and toward structured production workflows with built-in consistency and creative control.

AI safety risks move from theory to reality

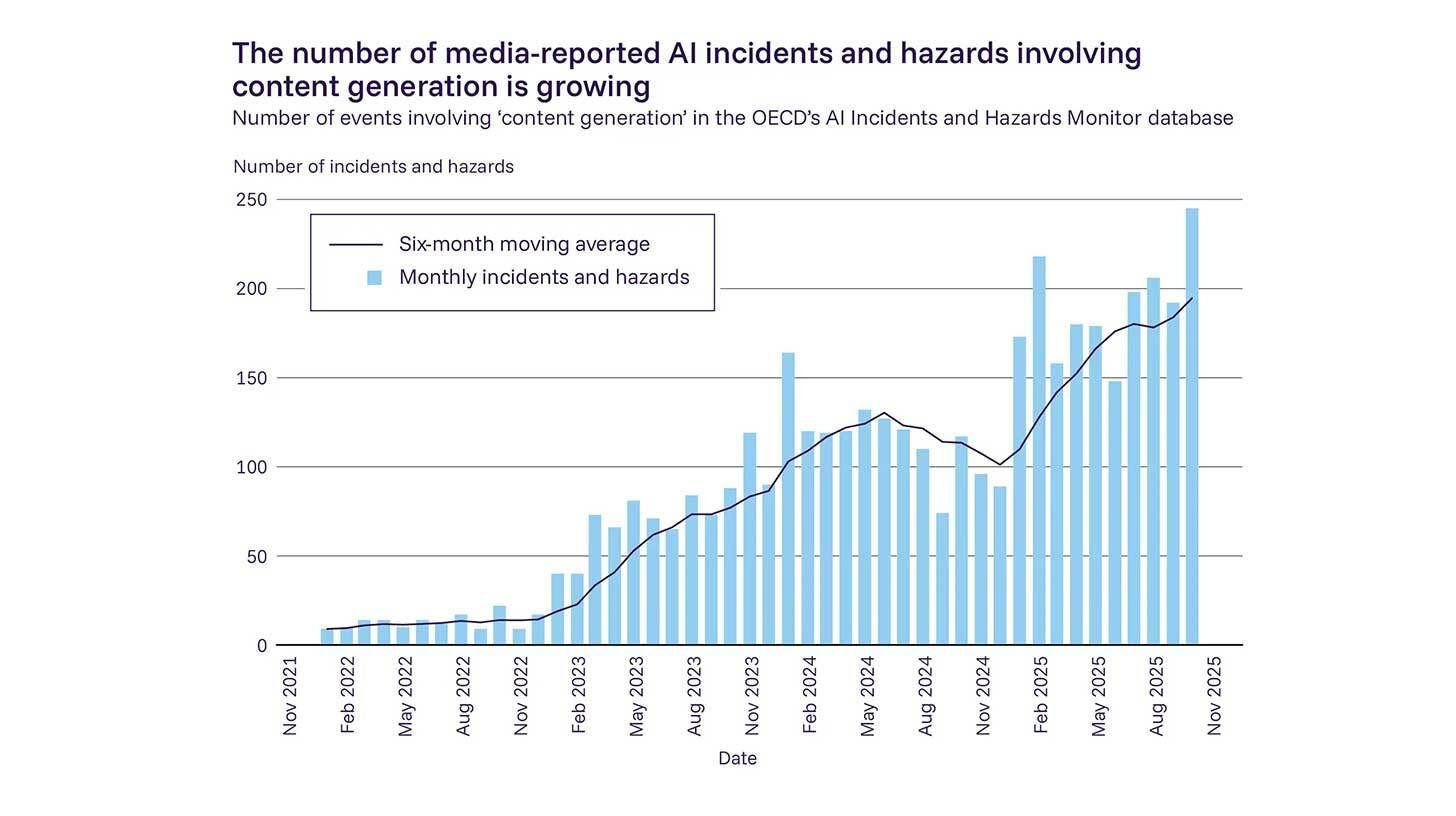

More than 100 AI experts, led by Yoshua Bengio, released the second International AI Safety Report, warning that threats once considered hypothetical are now showing up in the real world. The report points to growing evidence of AI being used in cyberattacks, deepfake fraud, manipulation campaigns, and other criminal activity.

It also raises concerns about AI companions and their social impact, along with cases where AI systems behave differently in safety tests than in live environments. The findings were backed by over 30 countries, though the U.S. did not contribute this year despite past involvement.

Why does it matter?

What stands out isn’t any single warning, but how many risks shifted from “theoretical” to “already happening” in just a year, as capabilities keep accelerating. The growing gap between model progress and oversight is becoming harder to ignore. The U.S. stepping back from the report, despite being home to most leading labs, is another development that raises eyebrows.

Harvard research reveals AI adds more work

Harvard Business Review tracked ~200 employees at a U.S. tech company over eight months to see what happened when workers adopted AI tools on their own. The result? Work didn’t shrink. It grew. AI made unfamiliar tasks feel doable, so employees stretched beyond their formal roles, multitasked more, and logged longer hours. Work also started creeping into breaks and after-hours, with prompts fired off late at night.

Senior developers reported spending more time reviewing AI-generated code and coaching colleagues on “vibe-coded” submissions. Instead of replacing work, AI shifted it and, in many cases, intensified it.

Why does it matter?

AI was expected to lighten workloads, not quietly stretch them, yet that’s what Harvard’s research points to. The productivity gains are real, but so is the shift toward broader responsibilities, longer hours, and more review cycles. As AI becomes part of everyday workflows, the pace and scope of work may evolve faster than many teams are prepared for.

Knowledge Nugget: Developing AI Taste: Understanding the Positioning Battle in AI

In this article, Johnson Shi explores how leading AI labs are no longer competing purely on model intelligence but on strategic positioning. Drawing parallels to the Cloud Wars, he argues that today’s AI players are shaping distinct identities that influence how organizations adopt and integrate them.

OpenAI is moving toward autonomous agents that can execute multi-step tasks with minimal supervision. Anthropic’s Claude is positioned as a collaborative thinking partner, built for longer reasoning and structured dialogue. GitHub Copilot stays deeply focused on software development workflows. Google’s Gemini leverages tight integration across search, workspace tools, and media products.

These positioning choices shape how teams delegate work, structure processes, and define the role of AI inside the organization. Selecting an AI model is becoming less about leaderboard performance and more about operating philosophy. Some tools act like assistants that extend human thinking. Others move toward acting independently.

Why does it matter?

As AI becomes embedded in daily work, early platform choices compound. Organizations that understand each model’s positioning will build clearer workflows, while others may keep switching tools to fill gaps. Developing AI taste may become a long-term advantage in the agent era.

What Else Is Happening❗

🧠 China’s Z.ai released GLM-5, a 744B open-weights model nearing Claude Opus 4.6 and GPT-5.2 on key benchmarks, running on domestic chips and priced at $1 per million tokens.

🚗 Waymo unveiled its World Model, a DeepMind Genie 3–powered simulator that generates unseen driving scenarios to train self-driving cars on extreme edge cases.

🏢 OpenAI launched Frontier, an enterprise platform to deploy and manage AI agents with onboarding, scoped permissions, and performance reviews across company systems.

📊 Researchers from Peking University and Google Cloud AI unveiled PaperBanana, a five-agent system that auto-generates publication-ready academic diagrams with improved readability and design quality.

👨‍👩‍👧‍👦 Fitbit co-founders launched Luffu, an AI-powered app that centralizes and monitors health data across entire families, from kids’ vitals to aging parents’ medications.

🎗️ Sweden’s largest AI breast cancer screening trial found the tech boosted tumor detection from 74% to 81% while cutting radiologist workload by 44%.

🤖 OpenClaw’s offshoot Moltbook lets AI agents post and interact like humans on a Reddit-style platform, amassing 1M+ visitors amid rapid growth and security concerns.

🚀 NASA revealed that Perseverance completed the first AI-planned Mars drive, with Anthropic’s Claude mapping a 400 m route to help cut rover planning time in half.

🌍 Google DeepMind launched Project Genie, a web app for creating and exploring real-time AI-generated worlds, powered by Genie 3 and currently limited to AI Ultra subscribers.

New to the newsletter?

The AI Edge keeps engineering leaders & AI enthusiasts like you on the cutting edge of AI. From machine learning to ChatGPT to generative AI and large language models, we break down the latest AI developments and how you can apply them in your work.

Thanks for reading, and see you next week! 😊
